This was definitely one of the toughest days, not only of Dashboard Week but of the Data School in general.

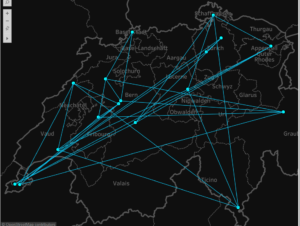

Our task for today was to get public transportation data for a city (or a country, in my case) and make a viz out of it. We had to find a suitable API, scrape our own data, clean it up and make a viz (much easier said than done).

After searching online for Switzerland’s public transportation, I found an open API which I believed would be useful.

Initially, I called an API to find the stations in the 20 biggest cities in Switzerland. This was useful because it also gave me the exact location of each station. However, I was not able to get any more information from it, so I decided to use a different API.

For the second workflow, I used a new API which gave me information about trains traveling between two stations.

I used an ‘Append Fields’ tool to pair the different stations together, after joining them to the API field. A Formula tool then modified the URL so that it would return the train line data between two stations.
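For readers who do not use Alteryx, the pairing and URL-building step can be sketched in Python. This is only an illustration of the idea, not the workflow itself: the endpoint `https://example.invalid/connections` and the `from` / `to` parameter names are placeholders I made up, and I skip self-pairs, which an Append Fields cross join would otherwise include.

```python
from itertools import permutations
from urllib.parse import urlencode

# Hypothetical endpoint and parameter names; swap in the real API's values.
BASE_URL = "https://example.invalid/connections"


def build_connection_urls(stations):
    """Pair every station with every other station and build one URL per pair."""
    urls = []
    # permutations(..., 2) yields ordered pairs and never pairs a station with itself
    for origin, destination in permutations(stations, 2):
        query = urlencode({"from": origin, "to": destination})
        urls.append((origin, destination, f"{BASE_URL}?{query}"))
    return urls


for origin, destination, url in build_connection_urls(["Bern", "Basel", "Luzern"]):
    print(origin, "->", destination, url)
```

The number of requests grows quickly with this approach: n stations produce n × (n − 1) ordered pairs, which is worth keeping in mind when the API has a request limit.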

After parsing the JSON, it was pretty much a matter of cleaning the data and structuring it properly.

One of the many challenges I faced was hitting the API request limit. The only solution I found was to use a VPN and send the requests again. After trying out multiple VPN services I ended up using ‘Tunnel Bear’ and ran the workflow. Since I did not want to face this problem again, I saved the results to an output file.
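The "save it once, reuse it afterwards" idea looks roughly like this in Python. It is a minimal sketch under my own assumptions: `load_or_fetch` and the `fetch` callback are hypothetical names, and the responses are assumed to be JSON-serialisable.

```python
import json
from pathlib import Path


def load_or_fetch(cache_path, fetch):
    """Return cached data if the file exists; otherwise call fetch() and save the result."""
    path = Path(cache_path)
    if path.exists():
        # Reuse the saved output so no API request is spent
        return json.loads(path.read_text(encoding="utf-8"))
    data = fetch()  # hypothetical callable that performs the real API requests
    path.write_text(json.dumps(data, ensure_ascii=False), encoding="utf-8")
    return data
```

With this in place, `fetch` runs only on the first call for a given file; every later run reads from disk, so re-running the cleaning steps does not eat into the request limit.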

Working from the output file, I figured out a way to create a path between each pair of stations so that I could connect them in the viz.

By assigning each of the stations a path ID, I was able to draw a path between them.
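One common way to shape data for this kind of path is to turn each connection into two rows, one per endpoint, that share the same path ID and carry a point order. The Python sketch below shows that reshaping; the field names (`path_id`, `point_order`, `lat`, `lon`) and the coordinates are illustrative assumptions, not the actual fields from my workflow.

```python
def to_path_rows(connections):
    """Turn each connection into two rows (start and end) that share one path ID."""
    rows = []
    for path_id, conn in enumerate(connections, start=1):
        # point_order tells the viz tool which end of the line comes first
        for point_order, end in enumerate((conn["from"], conn["to"]), start=1):
            rows.append({
                "path_id": path_id,
                "point_order": point_order,
                "station": end["name"],
                "lat": end["lat"],
                "lon": end["lon"],
            })
    return rows


# Approximate, illustrative coordinates
sample = [{
    "from": {"name": "Bern", "lat": 46.95, "lon": 7.44},
    "to": {"name": "Basel", "lat": 47.55, "lon": 7.59},
}]
for row in to_path_rows(sample):
    print(row)
```

Each line in the viz is then drawn by grouping on `path_id` and ordering the points by `point_order`.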

I was also able to get the travel duration between the two stations. However, because of the extensive cleaning required in Alteryx and the limited data available from the API, I was not able to extract anything more.