API (Application Programming Interface) is an intermediary that allows two applications to talk to one another. In other words, it works like a bridge between two programmes that would not be able to communicate otherwise. In the data world, it makes retrieving and in turn analysing data from websites easier. Even though getting datasets via API might sound complicated, it actually is not that bad! Once you learn the process for one case, others are more or less the same. All you need is an API URL path from a website of your choice and Alteryx.

A universal start of an API workflow on Alteryx looks like this:

1. In your Text Input tool, you want to create a field named “URL” and paste the copied API URL in the first row.

2. The second tool – Download – will then download the data for you.

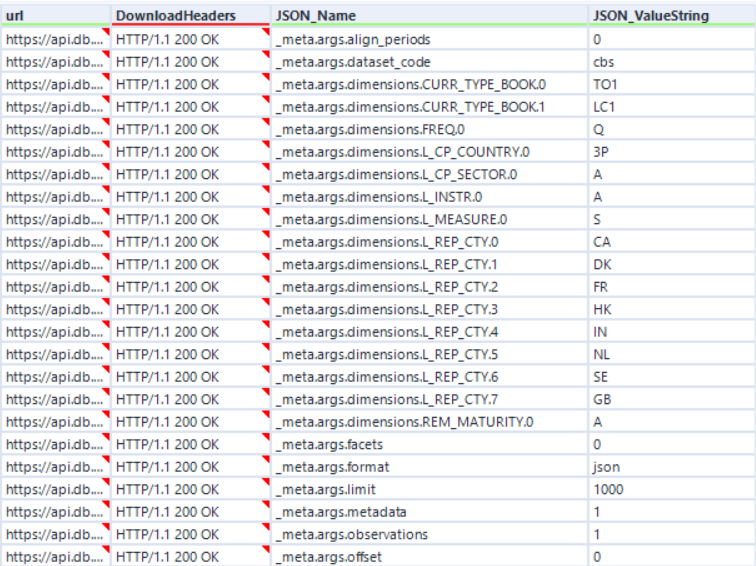

3. You then need to use the JSON Parse tool to convert your data into a readable format. When configuring this tool, make sure you select the right JSON field (“Download Data”). You should now see something like in the screenshot below.

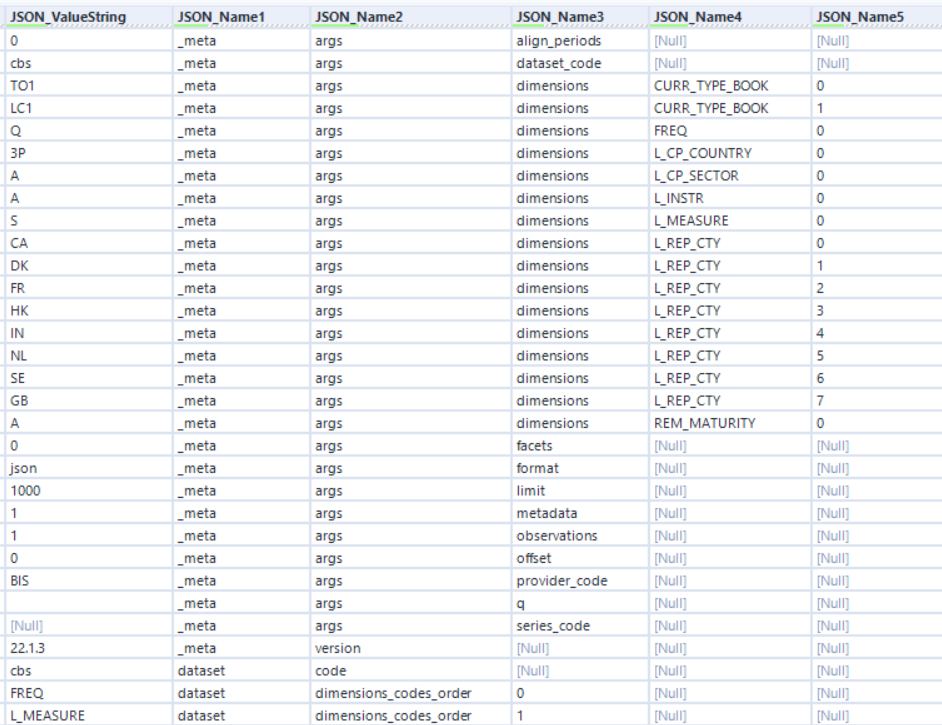

4. In the next step, remove “url” and “DownloadHeaders” fields with the Select tool as we no longer need them. This step is not necessary, but helps to keep your table tidy!

5. Finally, use the Text To Columns tool to split “JSON_Name” field into separate columns to be able to understand the data. The following table is then what you should be left with.

From this step, things may differ based on your dataset. In this specific case, I first had to focus on “JSON_Name1” that told me which rows are about metadata and which ones - about data. Understanding this helped me to transform my table into the right format. This part of data prep involves a lot of Preparation, Transform and Join tools, but because it is fairly easy and also different for each case, I will skip these steps in this blog post. Make sure you know, more or less, what columns your dataset should contain, and analyse the columns after the split (step 5) well. This will ensure you know what to do next.