What is Snowflake?

Snowflake is primarily a cloud-based data warehouse, although it can be used as a data lake if required. It allows you to have multiple databases from multiple locations.

Data Warehouse - a collection of databases, storing lots of different types of data from various sources. This becomes an almost library of data sources. Each person can 'own' multiple warehouses.

Database - an organised collection of tables that contain rows and columns of structured data.

Data Lake - a repository where structured and unstructured data is stored before it is brought into a data warehouse. An almost 'dumping ground' of data.

There are 3 platforms that Snowflake supports: AWS (Amazon Web Services), Azure or GCP (Google Cloud Platform). When you sign up for Snowflake, you have to choose what cloud platform you're going to deploy it on. Snowflake also is Cloud Agnostic - data can be duplicated and/or spread across multiple platforms and regions.

Why do we use it?

Snowflake is built specifically for cloud use and so there is no hardware or software to install, configure or manage. The nature of the cloud means that it overcomes some of the problems that can be common in other hardware-based data warehouses, for example performance and speed. If you want to load data faster, or run a high number of queries, you can scale up/out your virtual warehouse to accommodate these requests.

Scaling Up - This is the process of increasing the size of your warehouse by upgrading the RAMs and using a more powerful single server, allowing you to run large queries faster.

Scaling Out - Instead up upgrading an existing machine, this is the process of increasing the number of machines. This allows you to divide the work across multiple resources and carry out more tasks at the same time.

Furthermore, the flexibility of Snowflake means that you can increase or decrease the size of your warehouse based of your requirements at that time and only pay for the time you have used.

Snowflake also makes your data accessible. You can store your data in a number of availability zones using AWS, Azure or GCP. Whilst these servers can be held in a physical warehouse, you do not need to be in close proximity to store and access your data and can use any of the availability zones regardless of location. However, it is important to note that deploying data from the other side of the world will cause delays and so it makes sense to choose the zone closest to you. Often companies will duplicate their data and store it across multiple zones.

Snowflake's data-sharing capability allows companies to share tables to users outside of the account, for example a supermarket sharing sales data to their suppliers. This can be done using manual CSV files which often led to duplication of data and wasted labour. Sharing a limited view of live transactional data eliminates these problems and is unique to Snowflake

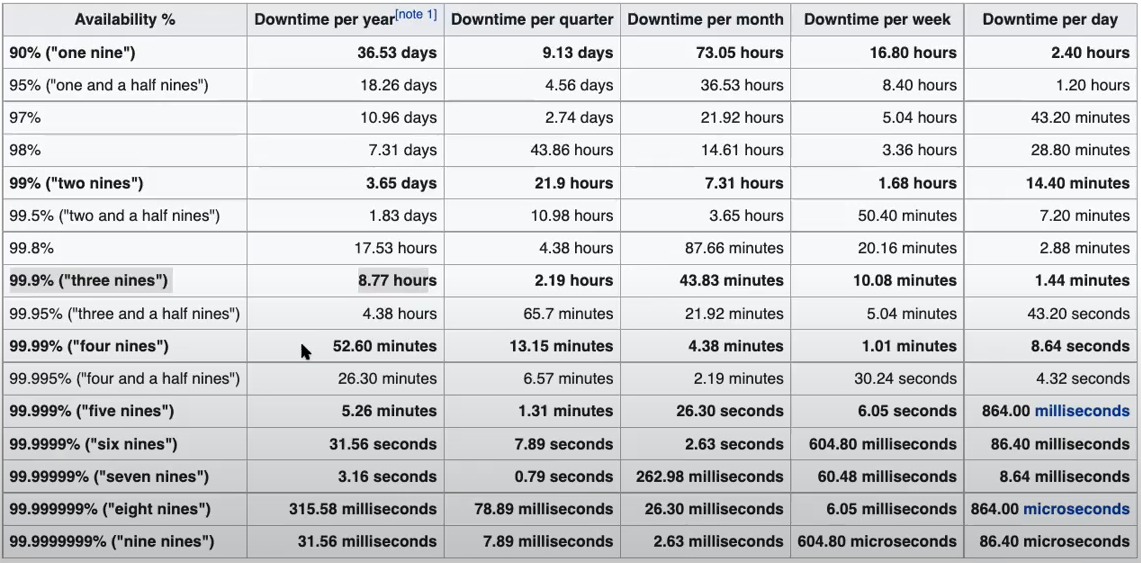

Finally, Snowflake works around the concept of High Availability. This aims to ensure an agreed level of operational performance (the uptime requirement) for a higher than average period of time. The aim of this is to prevent system crashes and loss of data (downtime). This is calculated on the basis of 'the nines':

Snowflake is designed in a way to give you the maximum number of nines whilst remaining cost effective.

ELT vs ETL - what's the difference?

ETL is a 3 - step process:

- Extraction - getting your data out of a source system. For example, a transactional database, CRM systems or website logs/activity.

2. Transformation - blending, shaping and trimming your data to fins valuable insights. This is where filters and aggregations come in.

3. Load - load into your database or Tableau

ETL is used by older systems due to limited capacity and processing power. However, Snowflake favours using ELT, allowing you to load your data to the cloud at a minimal cost and do your transformations there.

The Interface - What's what?

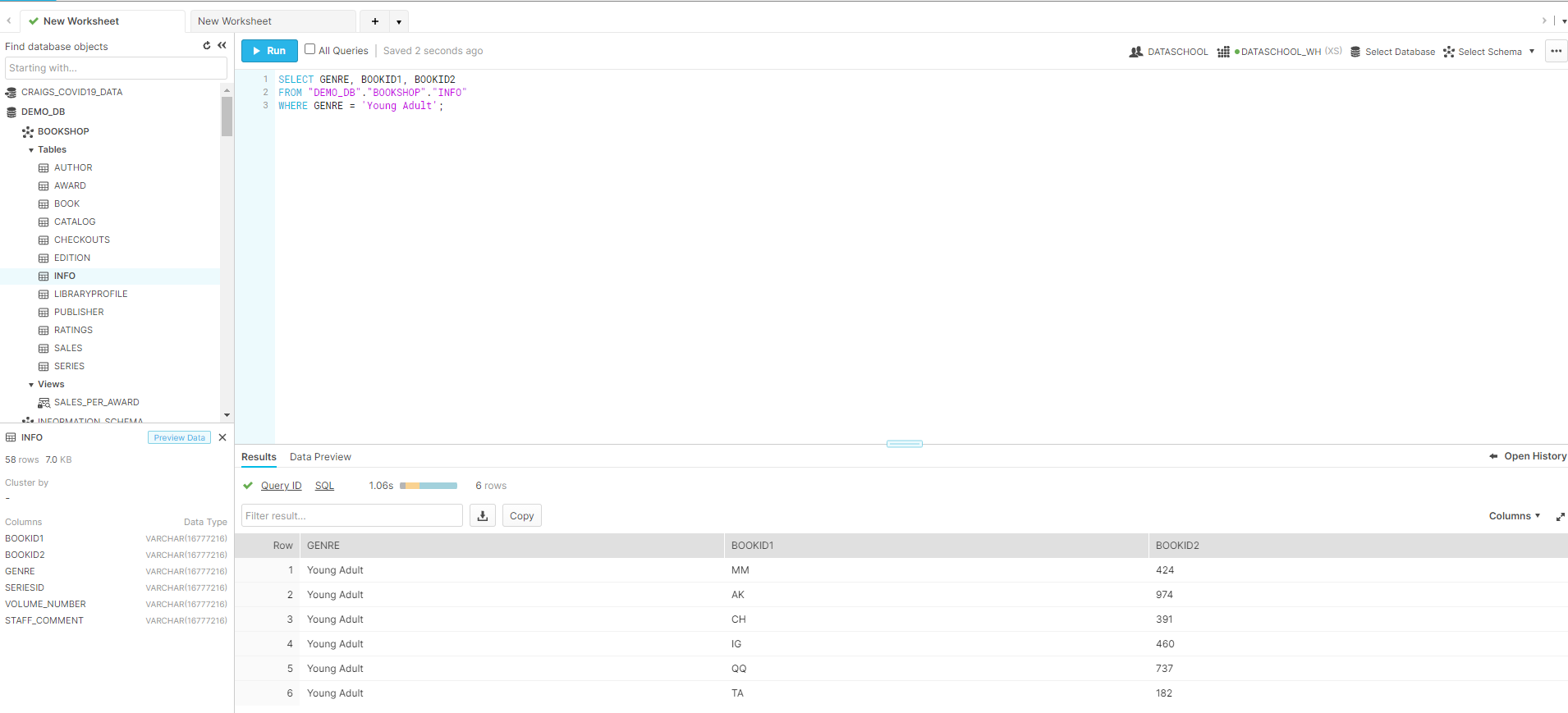

Worksheets - this is where you will write your SQL queries. Think of each sheet as you would an excel or Tableau worksheet.

SQL (Structured Query Language) - this is a computer language that allows you to transform your data within Snowflake

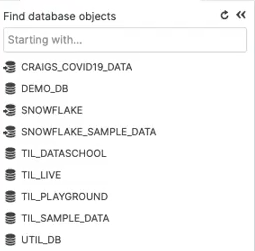

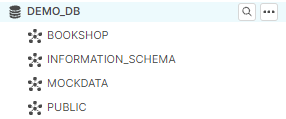

Databases are listed on the left hand side, ideally you would have a different database for each data source that's coming into the data warehouse. You can set roles within Snowflake and subsequent permissions for each role.

Schema - A collection of Tables that contain data that relate to each other. A Database is a collection of Schema.

Snowflake offers three types of tables, Temporary, Transient & Permanent:

- Temporary tables:

Only exist within the session in which they were created and persist only for the remainder of the session. They are not visible to other users or sessions.

Once the session ends, data stored in the table is purged completely from the system and, therefore, is not recoverable, either by the user who created the table or Snowflake.

- Transient tables

Persist until explicitly dropped and are available to all users with the appropriate privileges. These are specifically designed for transitory data that needs to be maintained beyond each session (in contrast to temporary tables)

- Permanent Tables (DEFAULT)

Similar to transient tables with the key difference that they do have a Fail-safe period. Which provides an additional level of data protection and recovery.

Views - these are a result of a SQL query and can be viewed as in in a table.

Databases, Tables and Schema are Snowflake Objects.

Transforming Data within Snowflake

Snowflake also has the capability to transform data and generate views within the Snowflake server using ELT. This can be done by writing SQL queries within the worksheets.

The above shows a basic SQL query on a mock dataset that returns the book IDs for those with the genre 'Young Adult'. This is a simple manipulation of the data and does not create a view, this would require the CREATE VIEW command.

Connecting to Snowflake in Tableau Desktop

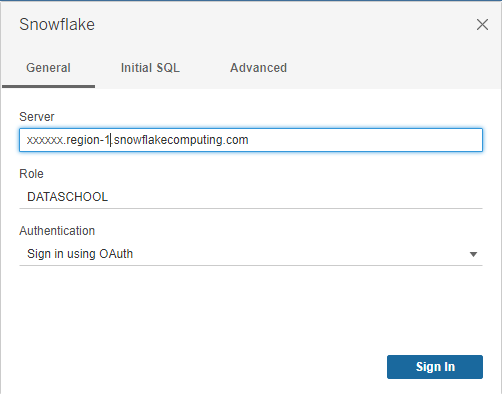

Snowflake is listed under the 'Server' section of the Tableau connection pane. Clicking on Snowflake will open the below window:

The server will be the URL address of your Snowflake (details have been blocked out for security), leave out the https prefix.

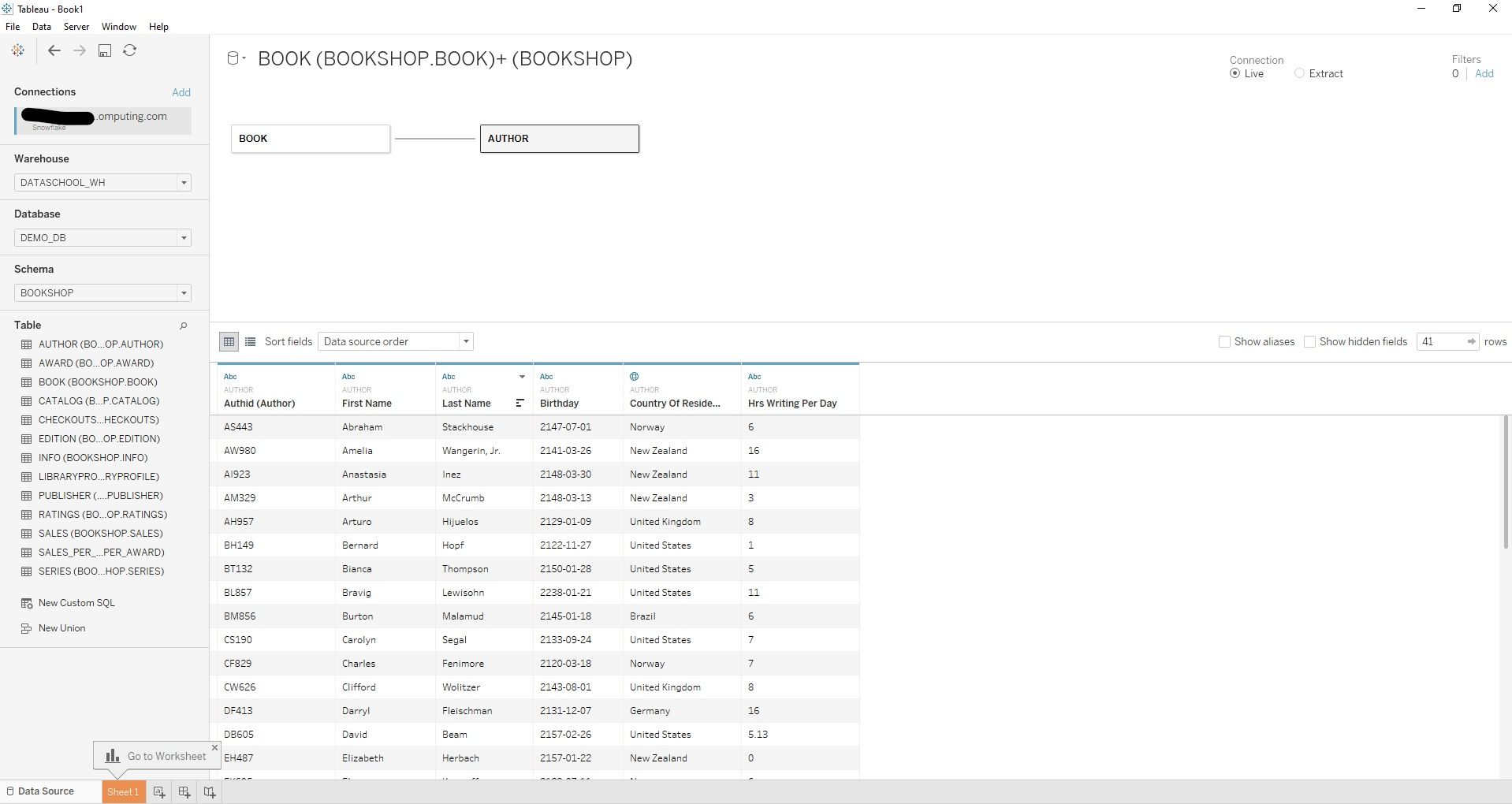

This will open an authentication window where you will have to re-enter your credentials. Once this has been done Tableau will ask you to select your Warehouse, Database and Schema from which you can select and join your tables.

This will automatically establish a live connection however an extract can be generated. Remembering each connection or query will cost and so extracts can be a cost effective way to build dashboards and write calculations before switching back to live.

To connect to Snowflake using Alteryx, the ODBC driver is needed. This can be found via the Download option on the Help icon and should be done from the Snowflake Repository.

Although this blog is fairly lengthy, it only just scratches the surface of the features and capabilities of Snowflake. There are plenty of resources on the Information Lab YouTube and our blogs that provide more information. The Information Lab also runs online training sessions on a weekly and monthly basis, some of which will cover Snowflake.