I think that Dashboard week has finally broken me. This morning we were given the task to web scrape information about UNESCO world heritage sites from Wikipedia. We had to scrape a general overview table as well as tables for each individual country.

At the beginning I was pretty optimistic about the challenge, as the HTML didn’t look too complicated. I was very naive.

My plan was to create a workflow in Alteryx to scrape the sites for Austria and then, once I had that working, bring in the other countries. This all worked well until I brought in those other countries.

My plan was premised on the tables for the different countries all being structured the same way. While they looked very similar in the browser, the HTML had small but significant differences.
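For anyone attempting this in code rather than in a workflow tool, here is a minimal Python sketch of one way to make row extraction less sensitive to markup differences. It assumes one particular kind of difference, namely that some tables put the site name in a `<th>` cell and others in a `<td>` cell, sometimes wrapped in extra inline tags. That is an illustrative assumption, not a description of what the Wikipedia pages actually do, and the `TableRows` class, `parse_rows` function and the two sample snippets are all made up for this example.

```python
from html.parser import HTMLParser


class TableRows(HTMLParser):
    """Collect the text of each table row, treating <th> and <td> cells alike."""

    def __init__(self):
        super().__init__()
        self.rows = []      # finished rows, each a list of cell strings
        self._row = None    # cells of the row currently being read
        self._cell = None   # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("th", "td") and self._row is not None:
            self._cell = []
        # Any other tag (a, i, span, ...) is ignored; only its text is kept.

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("th", "td") and self._cell is not None:
            # Join the fragments and collapse runs of whitespace.
            self._row.append(" ".join("".join(self._cell).split()))
            self._cell = None
        elif tag == "tr" and self._row is not None:
            if self._row:
                self.rows.append(self._row)
            self._row = None


def parse_rows(html):
    parser = TableRows()
    parser.feed(html)
    parser.close()
    return parser.rows


# Hypothetical snippets: same content, different markup for the name cell.
STYLE_A = (
    "<table><tr><th>Site</th><th>Location</th></tr>"
    "<tr><th scope='row'><a href='#'>Historic Centre of Vienna</a></th>"
    "<td>Vienna</td></tr></table>"
)
STYLE_B = (
    "<table><tr><th>Site</th><th>Location</th></tr>"
    "<tr><td><i>Historic Centre of Vienna</i></td><td>Vienna</td></tr></table>"
)

print(parse_rows(STYLE_A))
print(parse_rows(STYLE_B))
```

Both snippets produce the same list of rows, so later steps don't need a separate branch per markup style. A sketch like this wouldn't cope with nested tables or cells that span several rows, which may well be part of what made the real pages difficult.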

I tried creating some separate branches in my workflow to capture a couple more countries, hoping they would also capture other countries with similar formatting. It worked a bit, but not enough.

As time was getting on, I had to work with what I had, and what I had was very bad data. Some of the UNESCO World Heritage Sites had multiple locations, some were duplicated, and some entries weren’t sites at all: ‘sport’ and ‘water’, for example.

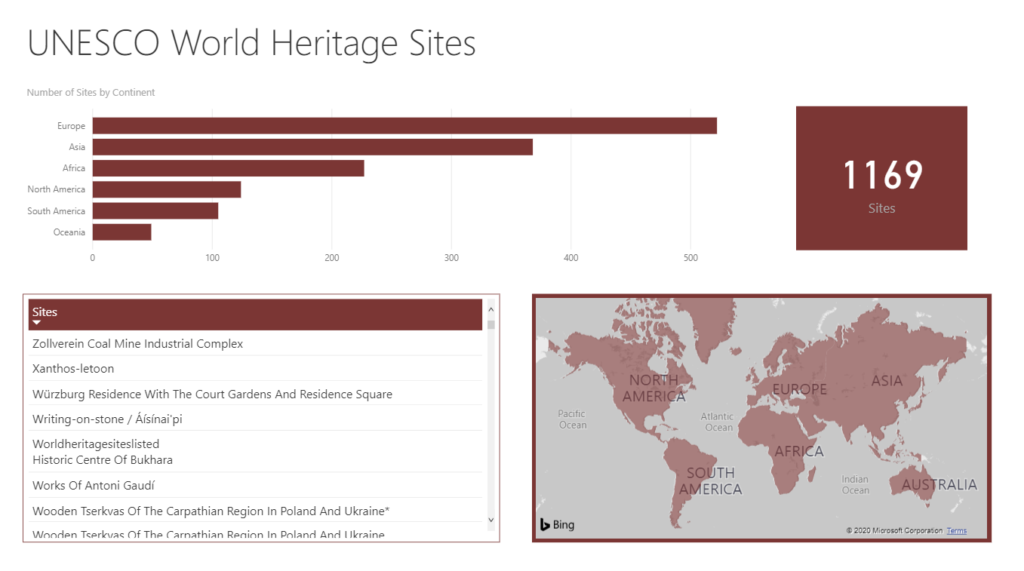

I tried to make do with this very bad data by mapping each site to its continent, hoping that there would be fewer mistakes at a coarser level of granularity.

With a little bit of time left, I brought the data into Power BI, the second part of the challenge. Because I didn’t have any measures in the data, I had to work with a distinct count of the site names, which allowed me to show the number of sites in each continent.
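The continent mapping and the distinct count are simple enough to sketch outside Power BI. The Python below is only an illustration: the sample rows, the `COUNTRY_TO_CONTINENT` lookup and the `distinct_sites_per_continent` function are hypothetical, not my actual data or workflow. It also tidies whitespace and letter case before counting, which is an extra step I'm assuming here so that near-duplicate names collapse into one.

```python
from collections import defaultdict

# Hypothetical scraped rows: (site name, country), including messy duplicates.
rows = [
    ("Historic Centre of Vienna", "Austria"),
    ("Historic Centre of Vienna", "Austria"),   # exact duplicate
    ("Wachau Cultural Landscape", "Austria"),
    ("Great Barrier Reef", "Australia"),
    ("  great barrier reef ", "Australia"),     # same site, untidy text
]

# Hypothetical lookup table; a real one would cover every country scraped.
COUNTRY_TO_CONTINENT = {"Austria": "Europe", "Australia": "Oceania"}


def distinct_sites_per_continent(rows, lookup):
    """Count distinct site names per continent."""
    seen = defaultdict(set)
    for site, country in rows:
        continent = lookup.get(country, "Unknown")
        # Collapse whitespace and ignore case so near-duplicates count once.
        seen[continent].add(" ".join(site.split()).casefold())
    return {continent: len(names) for continent, names in seen.items()}


print(distinct_sites_per_continent(rows, COUNTRY_TO_CONTINENT))
```

Using a set per continent means duplicated rows don't inflate the totals, which is the same reason a distinct count was the only sensible aggregate for data this messy.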

It’s a shame that my data didn’t turn out as planned. It would have been nice to explore more of what Power BI can do.

My first Power BI Dashboard: