Ok, so this week is going to be tough…

That is, at least, if the events of yesterday are anything to go by. Although the task itself wasn’t too bad, the hectic 4:30 rush to finish my blog and publish my Viz was. What I had initially intended to be a coherent account of the challenges of the day turned into a manic stream of consciousness, to the point where no blog at all would have been equally valuable, if not preferable…

So my challenge for day 2 is to better manage these 6 or so hours we get and produce a more substantive blog to accompany whatever Viz I muster.

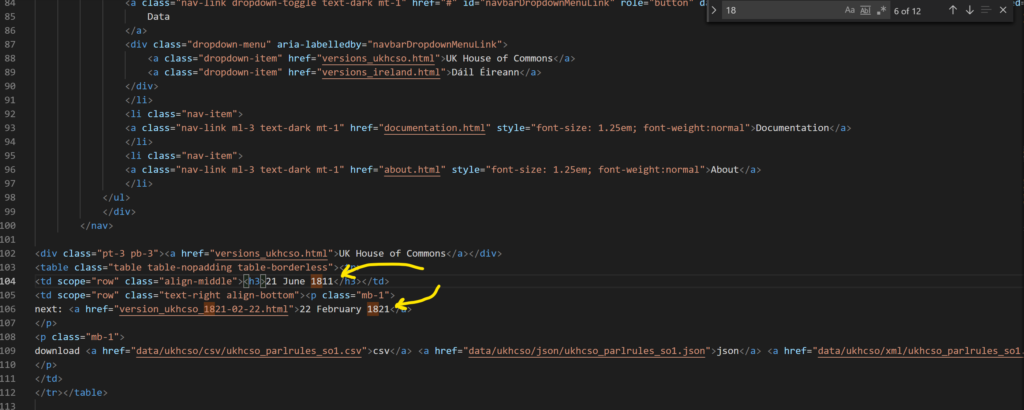

Today we’ve been tasked with scraping all of the Parliament Rules in the Parliament Rules Database, getting it into a Vizable format, and making a Viz from the result. Web-scraping is one of my favorite Alteryx topics, so I’m pretty chuffed by this challenge, even if the Data itself is a bit dry… As soon as we looked at the website it became clear that we would have to create a Batch Macro to feed all of the individual URLs for the web pages we were wanting to scrape. This was relatively easy, as date formats are fairly easy to identify inside HTML code:

Once those were extracted, I then needed to feed that into a Macro to update the URL within that Macro.
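For anyone wanting to try the same idea outside Alteryx, here is a rough Python sketch of those two steps: pulling date strings out of HTML with a Regex, then slotting each one into a URL the way the Batch Macro updates its URL per record. The sample HTML, the `YYYY-MM-DD` date format, and the `example.org` URL template are all made up for illustration; the real site's markup and URL scheme are not shown here.

```python
import re

# Hypothetical HTML snippet; the real index page's markup will differ.
SAMPLE_HTML = """
<a href="/rules/2015-03-26">26 March 2015</a>
<a href="/rules/2016-05-12">12 May 2016</a>
<a href="/rules/2015-03-26">duplicate link</a>
"""

# Hypothetical URL template standing in for the URL updated inside the macro.
URL_TEMPLATE = "https://example.org/rules/{date}"

# Assumed date format: four digits, two digits, two digits, separated by hyphens.
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


def extract_dates(html):
    """Return the unique dates found in the HTML, in order of first appearance."""
    seen = []
    for match in DATE_PATTERN.findall(html):
        if match not in seen:
            seen.append(match)
    return seen


def build_urls(dates, template=URL_TEMPLATE):
    """Build one URL per date, mirroring one batch run per control record."""
    return [template.format(date=d) for d in dates]


dates = extract_dates(SAMPLE_HTML)
urls = build_urls(dates)
```

Each entry in `urls` plays the role of one record fed to the Batch Macro's control parameter.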

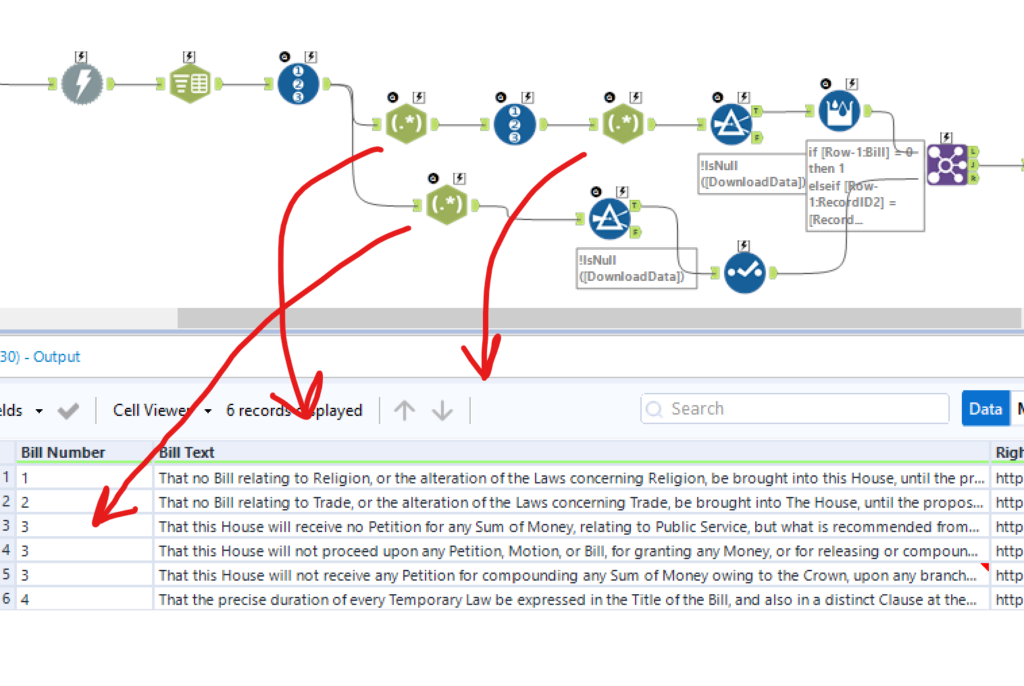

Then, using the download tool, and a bit more Regex, I managed to isolate the Text of the Rules in separate rows:

However, I ran into a problem whereby certain rules were split over multiple rows (from my Tokenizing Regex output) meaning that I might get 6 rows for 4 rules, with no way of knowing which rows belonged to which rule.

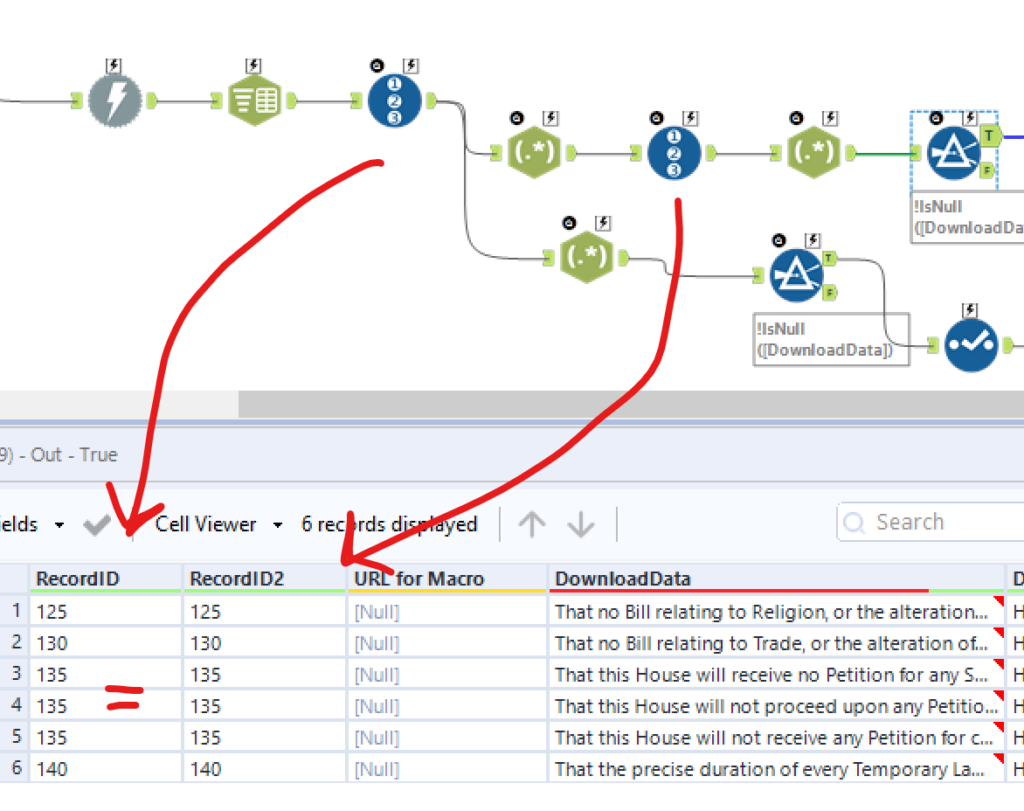

I thought of a nifty solution to this problem using a couple of Record ID tricks:

I broadened my original Regex condition to grab the entire Rule on a single row, and then, after adding a new Record ID, re-ran my original Regex condition to extract the individual lines. This meant that once I had filtered out the null results, the Record IDs matched whenever the text belonged to the same Rule.
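The same two-pass idea can be sketched in Python. This is only an illustration under assumptions: I am pretending each Rule sits in an `<li>` element and that lines within a Rule are separated by `<br>` tags, which may not match the real page at all. The point is the order of operations: capture whole Rules first, number them, and only then split into lines so each line inherits its Rule's ID.

```python
import re

# Hypothetical markup: one <li> per rule, <br> between lines within a rule.
SAMPLE_HTML = (
    "<li>Rule one, first line<br>Rule one, second line</li>"
    "<li>Rule two</li>"
    "<li>Rule three, first line<br>Rule three, second line</li>"
    "<li>Rule four</li>"
)

# Broad pattern: the whole rule in one match (non-greedy, spans newlines).
RULE_PATTERN = re.compile(r"<li>(.*?)</li>", re.DOTALL)
# Narrow pattern: the separator between individual lines of a rule.
LINE_BREAK = re.compile(r"<br\s*/?>")


def tokenize_rules(html):
    """Return (rule_id, line_text) rows; lines of one rule share a rule_id."""
    rows = []
    # Pass 1: one match per rule, numbered like a Record ID.
    for rule_id, rule_html in enumerate(RULE_PATTERN.findall(html), start=1):
        # Pass 2: split the rule into lines, carrying the ID along.
        for line in LINE_BREAK.split(rule_html):
            text = line.strip()
            if text:  # drop empty results, like filtering out nulls
                rows.append((rule_id, text))
    return rows


def regroup(rows):
    """Join the lines back together per rule, keyed by rule_id."""
    grouped = {}
    for rule_id, text in rows:
        grouped.setdefault(rule_id, []).append(text)
    return {rule_id: " ".join(lines) for rule_id, lines in grouped.items()}


rows = tokenize_rules(SAMPLE_HTML)
rules = regroup(rows)
```

With this sample, `rows` has 6 entries across 4 rule IDs, which is exactly the "6 rows for 4 rules" situation, except that now each row knows which rule it belongs to.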

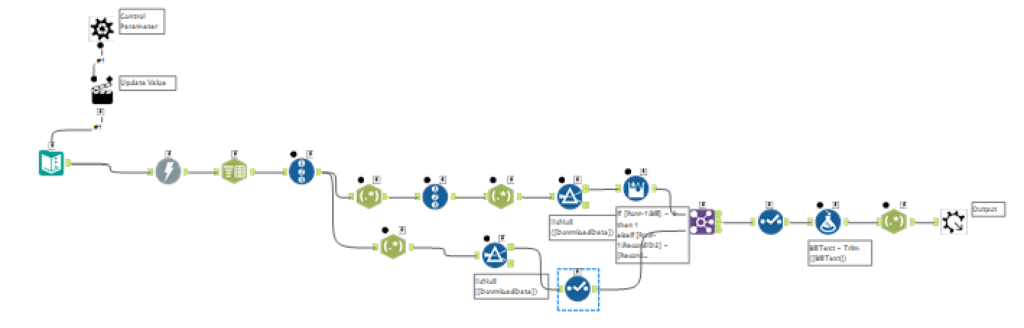

The next job was to configure the new workflow to accept the output from my other workflow as a Batched Input, meaning that when I ran the outer workflow, all my results would appear:

I now had my output, and could make a start on my Viz, which presented an entirely new challenge, namely, what on earth is there to Vizualise?

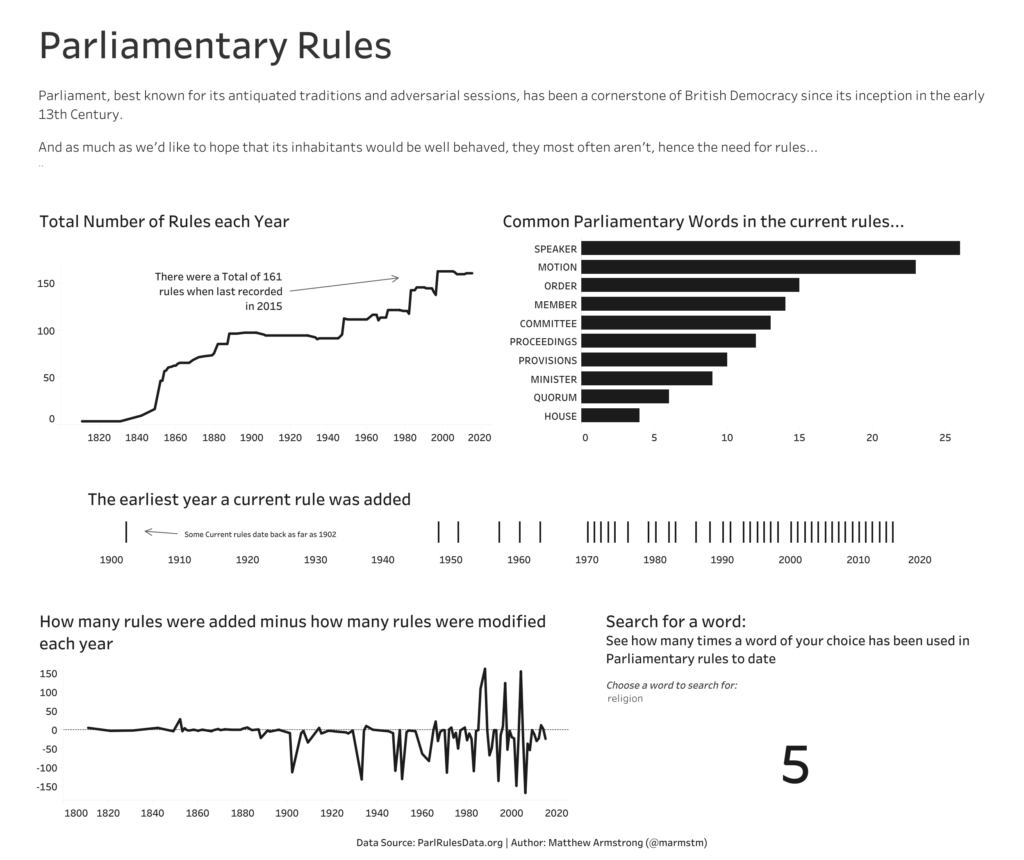

In the end I settled on a simple Viz, outlining some of the most common words as well as some more general statistics about the rules.
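For reference, counting the most common words is simple to sketch in Python. The stop-word list and the sample rule texts below are invented for illustration, and the actual Viz may have counted words differently.

```python
import re
from collections import Counter

# Hypothetical stop words; a real list would be longer.
STOP_WORDS = {"the", "a", "of", "to", "and", "in", "be", "shall", "is"}


def top_words(texts, n=3):
    """Return the n most common non-stop words across all texts."""
    counts = Counter()
    for text in texts:
        # Lowercase first so "Speaker" and "speaker" count as the same word.
        for word in re.findall(r"[a-z']+", text.lower()):
            if word not in STOP_WORDS:
                counts[word] += 1
    return counts.most_common(n)


# Made-up rule texts, not taken from the actual database.
sample_rules = [
    "The Speaker shall call the House to order.",
    "A member shall address the Speaker.",
    "The House shall vote on the motion.",
]

common = top_words(sample_rules, n=2)
```

Here `common` holds word and count pairs that could feed a bar chart or word cloud directly.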

Here’s how it turned out:

Overall, this has been a much more successful day when compared to yesterday, a trend I hope, but do not expect, to continue for the rest of the week!