UPDATE: Craig Bloodworth has created a web data connector that imports data from ParseHub directly into Tableau. You still have to wait until ParseHub has extracted the data on its servers, but you do not need to download the CSV file if you connect directly with the web data connector found here: http://data.theinformationlab.co.uk/parsehub.html

Although we’ve come a long way in terms of analyzing data, one of the most challenging tasks for an analyst is still finding a way to collect and access clean and governed data. I often find myself reading an article or coming across a website and thinking, “hey, I could do something really cool with this information.” But the question is, how do I actually pull that information out, especially when the information would constitute a massive data file? Enter ParseHub.

ParseHub is a data extraction tool that gives you a lot more control than services like Import.io when pulling data from dynamic websites. To get started, head over to their main page at https://www.parsehub.com/, sign up, and download the program (available for Windows, Mac OS, and Linux). The program is free to use unless you sign up for one of their premium plans, which give you faster extraction rates and let you scrape more pages than the free option. Once you’ve got the program installed, I HIGHLY recommend going through the built-in tutorials, at least to get a basic idea of how the program runs through its commands on a website. They’ve also got more written and video tutorials on their website that are quite helpful.

For this post I’ll walk through the process I used to extract data from www.disabledgo.com, a website where people with various accessibility needs can search for venues and services that meet those needs. I used this dataset to create an Alteryx app that generates a list of accessible venues as a Tableau dashboard. I plan on publishing it soon, once I’ve added some geodata so that it also launches a map alongside the list of accessible venues (fingers crossed!).

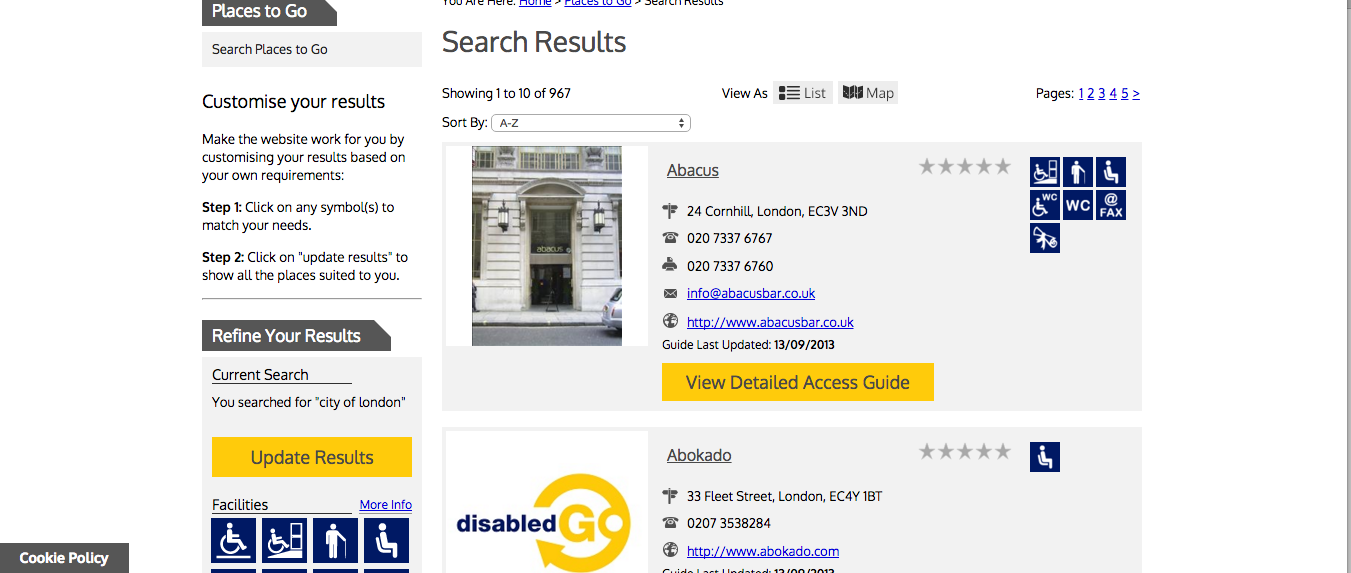

The first thing you do when you open up the app is browse to the first page of the website you want to extract data from. As long as you have the Browser arrow tool selected, you can navigate around and use ParseHub as a regular browser until you get the view you need. Lists tend to work best for extracting data, so I recommend doing a search for whatever data you need and bringing it up as a list of results before starting your data extraction commands. It’s okay if your results are spread over several pages. ParseHub has an excellent way to navigate through them all, as you’ll see in a second.

Search results obtained from www.disabledgo.com in list form

Once you’ve got your first page, the first thing you want to extract is the first column of data that you would have in your dataset. In my case, I am extracting the names of the venues first. I can do this by choosing the select tool, then clicking on one of the titles of the venues.

My selection only picks up on the title of the venue that I clicked. However, if I hold down the shift key and click on another title, ParseHub will recognize a pattern and will pick up on the other names on the screen.

Once I’ve got my names selected, I then want to tell ParseHub to create a list from those names and extract them into my dataset. I can do this using the appropriately named list and extract tools.

When I do this, you can see how things update in the Sample view and how ParseHub is responding to my commands. You can also switch the view to see how things look in CSV format.

Next, I want to extract the address and telephone information for each venue. I also want ParseHub to recognize that this information is part of each individual venue. Therefore, rather than the select tool, we use the relative select tool and first click on the venue name, then the associated address or telephone number to link it to the name. As before, if ParseHub doesn’t select all venues and addresses/numbers automatically, we can use the shift key to tell ParseHub to pick up on a pattern so that it will do a relative select for each venue and associated address/telephone number.

One thing to keep in mind is that commands you do in ParseHub are done in “nodes.” For example, under my node labeled “List name,” I want ParseHub to run my relative select command for address and telephone information, but I also want it to extract the data as part of the venue name data I’ve already extracted. That’s why you need to make sure you click on the appropriate node before setting up your commands.
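To see why linking each address or telephone number to its venue matters, here is a minimal Python sketch. It is not how ParseHub works internally, and the venue names and numbers are made up for illustration. It compares two independent flat selections with a per-venue (relative) lookup when one venue has no telephone number listed.

```python
# Hypothetical venues; the second one has no telephone number on the page.
venues = [
    {"name": "Example Cafe", "telephone": "020 0000 0001"},
    {"name": "Sample Library"},
    {"name": "Demo Cinema", "telephone": "020 0000 0003"},
]

# Independent selections: two flat lists with nothing tying them together.
names = [v["name"] for v in venues]
phones = [v["telephone"] for v in venues if "telephone" in v]

# Pairing the flat lists by position shifts every number after the gap
# onto the wrong venue (and silently drops the last venue).
misaligned = list(zip(names, phones))

# Relative selection: the number is looked up from within each venue,
# so a missing value stays missing instead of shifting the rest.
linked = [(v["name"], v.get("telephone")) for v in venues]

print(misaligned)
print(linked)
```

In the misaligned version, "Sample Library" ends up with the cinema's number. That is the kind of quiet error that working under the right node helps you avoid.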

The last thing I want to do is extract the accessibility information for each venue. In this case, my information can only be seen when I hover over the icons next to my venues. ParseHub can still extract this information if we change the settings under the extract tool to extract an “alt Attribute” instead of “text.”
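If the difference between “text” and an “alt Attribute” is unclear, this small Python sketch may help. It uses only the standard library, and the markup is a made-up stand-in rather than the real page source from the website. The icons carry no visible text, so the description has to be read from the `alt` attribute of each `<img>` tag.

```python
from html.parser import HTMLParser


class AltCollector(HTMLParser):
    """Collect the alt attribute of every <img> tag that has one."""

    def __init__(self):
        super().__init__()
        self.alts = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            alt = dict(attrs).get("alt")
            if alt:
                self.alts.append(alt)


# Hypothetical markup for one venue; not copied from the real site.
SAMPLE_HTML = """
<li class="venue">
  <a class="venue-name">Example Cafe</a>
  <img src="ramp.png" alt="Ramped access">
  <img src="toilet.png" alt="Accessible toilet">
</li>
"""


def extract_alts(html):
    """Return the alt text of each image in the given HTML string."""
    parser = AltCollector()
    parser.feed(html)
    return parser.alts


print(extract_alts(SAMPLE_HTML))
```

Extracting “text” from those image elements would come back empty, which is why the setting needs to be changed for icon-only information.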

If I run my data extraction now, ParseHub will give me all the information from this first page of results. However, I want ParseHub to run my same set of commands on EVERY page of results. I can set this up with the navigate tool.

The first thing I do before using the navigate tool is click on the page node to indicate to ParseHub that I will be selecting something from the main page view on every page of results. I then select the page numbers at the top using the select tool and holding my shift key to get all the page numbers selected. NOTE: You do NOT need to have the page numbers selected at both the top and the bottom. Once I have the numbers selected, I click on the navigate tool to input a navigate command. In this case, I want ParseHub to go to each page by “clicking” on the selection that I previously made (the page numbers) and I want it to run the same commands that I have already input under the main_template.
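Conceptually, the navigate command amounts to “follow every page link and run the same template on each page.” The sketch below is my own rough Python model of that idea, not ParseHub’s actual implementation. The `PAGES` dictionary, `run_template`, and `scrape_all` are all hypothetical names standing in for the website and the commands set up above.

```python
from collections import deque

# Hypothetical in-memory "site": every results page links to all page numbers,
# much like the row of page numbers at the top of the search results.
PAGES = {
    "page-1": {"venues": ["Example Cafe", "Sample Library"],
               "links": ["page-1", "page-2", "page-3"]},
    "page-2": {"venues": ["Demo Cinema"],
               "links": ["page-1", "page-2", "page-3"]},
    "page-3": {"venues": ["Test Museum"],
               "links": ["page-1", "page-2", "page-3"]},
}


def run_template(page):
    """Stand-in for the extraction commands: return the venues on one page."""
    return list(page["venues"])


def scrape_all(pages, start):
    """Visit each linked page once and run the same template on it."""
    visited = set()
    queue = deque([start])
    results = []
    while queue:
        url = queue.popleft()
        if url in visited:
            continue  # the same link appearing twice adds nothing new
        visited.add(url)
        page = pages[url]
        results.extend(run_template(page))
        queue.extend(page["links"])
    return results


all_venues = scrape_all(PAGES, "page-1")
print(all_venues)
```

In this model, a second copy of the same page links (for example, a duplicate row at the bottom of the page) would only point at pages that are already queued or visited, which matches the note above about selecting the page numbers once.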

I’ve now got all the commands that I want ParseHub to run and I can start my data extraction. To do this, click the green “Get Data” button, “Run Once,” then “Run on Servers.” ParseHub will now extract the data and send you an e-mail once it is finished. Once it’s done, you can go back to the data page and download your extract as either a JSON or CSV file. The time it takes to run an extract depends on the number of pages it’s working through, and if you are using a free account, ParseHub can only go up to a maximum of 200 pages per project. With the dataset I created, I worked through 98 pages in about 10 minutes and ended up with a fairly clean dataset.
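If you download the JSON file rather than the CSV, you may want to flatten it into one row per venue before loading it into Tableau or Alteryx. Here is a minimal Python sketch using only the standard library. The shape of the export, the `venue` list key, and the field names are assumptions that depend on how you named your own nodes, so adjust them to match your project.

```python
import csv
import io
import json

# Hypothetical export; the key and field names depend on your own node names.
SAMPLE_EXPORT = json.dumps({
    "venue": [
        {"name": "Example Cafe", "address": "1 Sample Street",
         "telephone": "020 0000 0001",
         "access": [{"name": "Ramped access"}, {"name": "Accessible toilet"}]},
        {"name": "Sample Library", "address": "2 Sample Street"},
    ]
})

FIELDS = ["name", "address", "telephone", "access"]


def flatten(export_text, list_key="venue"):
    """Turn the nested export into one flat dictionary per venue."""
    data = json.loads(export_text)
    rows = []
    for venue in data.get(list_key, []):
        rows.append({
            "name": venue.get("name", ""),
            "address": venue.get("address", ""),
            "telephone": venue.get("telephone", ""),
            # Join the accessibility labels into a single cell.
            "access": "; ".join(item.get("name", "")
                                for item in venue.get("access", [])),
        })
    return rows


def to_csv(rows):
    """Serialize the flat rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = flatten(SAMPLE_EXPORT)
print(to_csv(rows))
```

Missing fields come through as empty strings here, so a venue without a telephone number still gets its own row.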

ParseHub is definitely an improvement over tools like Import.io, but it does have its limitations. I’ve noticed that the more dynamic a website is, the trickier it is to get ParseHub to automatically recognize patterns of information when you are making selections. Their website has a bunch of tutorials on how to work with more dynamic websites, but ultimately there is a bit of a learning curve to get things working right. My hope is that the more people use this tool, the more development will go into it and the smoother the experience will become. Right now, we’ve got Tableau as the ultimate visualization tool and Alteryx as the ultimate cleanup tool, and hopefully in the near future we can complete a holy trinity with ParseHub as the ultimate extraction tool.