The task for yesterday afternoon was to take data from https://data.police.uk/ and run it through Alteryx to make it useable in Tableau. Peter has previously written about the work he has done with this dataset. With this API you need to specify both a location (longitude and latitude) and a month and year that you would like to retrieve. In my case I chose the area around my home and replaced the latitude and longitude in the base URL. However, I wanted to get all data available throughout the years for this specific location. These are all set up exactly the same way, with the same column headers just the data for different time periods. There are several ways of doing this but only one that I discovered that is really sensible for this large number of URLs.

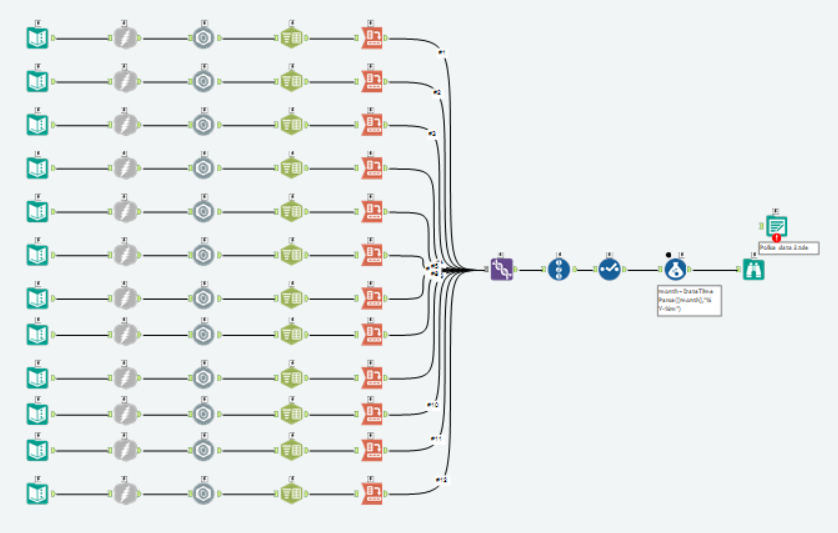

Approach 1

In my first attempt at getting data for multiple months I replicated a part of my workflow and altered each URL separately. Each dataflow retrieved just one month of the data. So even just getting the data for 2015 would take 12 workstreams. Messy and inconvenient.

Approach 2

The reason I only joined the workstreams after the crosstabs tool was because the json name identifier repeats in every dataset and in the crosstabs this then concatenates and merges the data from all the different files. This can actually be avoided by creating a new column that concatenates the year with the json name. When using the crosstab, this new field then distinguishes both between the data sources and the unique cases (numbered 0 to n in each file).

So to query multiple APIs you don’t need to multiply the workstreams, you can just add all the different URLs to the initial text file and then make sure that the workstream includes a formula tool that stops the case name from being duplicated across the datasources. Again though, this involves manually changing the last part of the URL, in this case over 40 times. Still a lot of work.

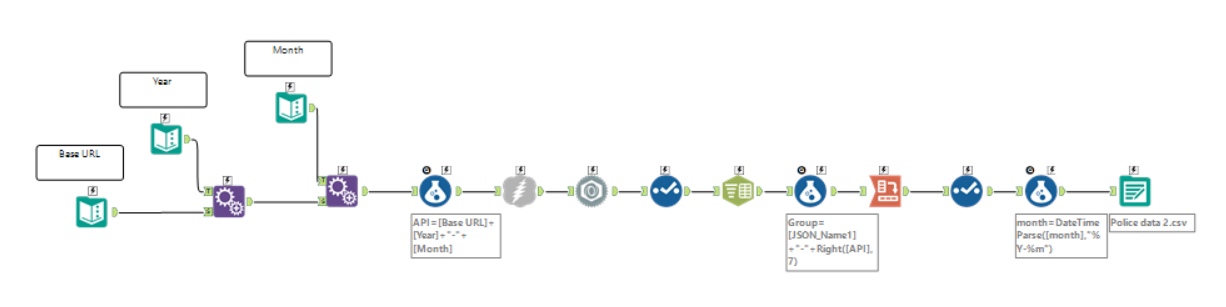

Approach 3

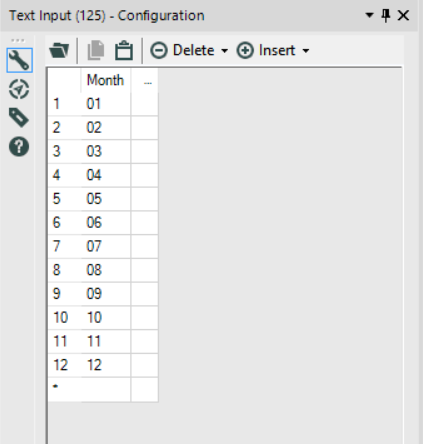

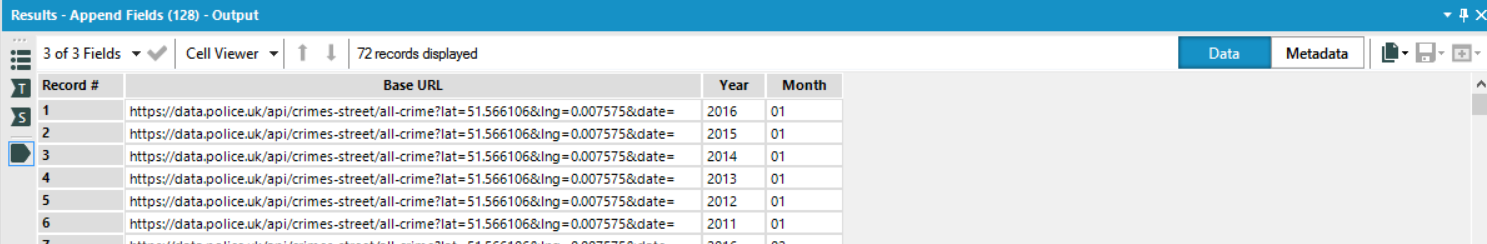

There is a way around this, with text input tools. The first input tool just includes the base URL without any date. The second includes a list of all the years I want to import, the third all the months. These are merged with the Append Fields tool

Example of the month input

These are then added together with the Formula tool ([Base URL]+[Year]+”-“+[Month]) to create the URL that we want, resulting in 72 unique URLs. Now we can continue from here as we did previously, downloading and parsing that json and formatting it with other tools.

If we wanted to add more years all we need to do is alter the text input. This can easily include future years as well. As long as there is no data there these will just return empty but it means that all we need to do to get the newest data is press play every few months, without any tedious copying and pasting.