We’re over the hump of Dashboard Week: 4 days done and 1 more to go. It’s been a long week, but I’ve learnt an awful lot in it. In this blog I thought I’d go back to something I learnt on Monday – when we were in the midst of Parkrun-gate. We were trying to scrape the Parkrun website to get data for our vizzes – this was before we found out that doing so is against their terms of service – and, surprisingly enough, found that we were being summarily blocked from their website. While I was trying to find a way to stop this happening, Chris Love suggested I try the Throttle tool. I’d never heard of it before Monday, so on the off chance that you’re in a similar position, here is a quick introduction to this useful tool.

The Throttle Tool

What is it?

The Throttle tool is hidden away in the Developer tool category along with a host of other lesser-used tools. It is a pretty specialised tool: it limits how many records per minute pass through your workflow. This might sound like a weird thing to want, but in some very particular circumstances it is an absolute godsend.
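To make "limiting the speed" a bit more concrete, here's a rough Python sketch of the same idea. The `throttle` generator is hypothetical and simply spaces records evenly, 60/N seconds apart, so at 35 records a minute each record is released roughly every 1.7 seconds. I don't know exactly how Alteryx schedules records internally, so treat this as an illustration of the concept rather than how the tool is actually implemented.

```python
import time
from typing import Callable, Iterable, Iterator, TypeVar

T = TypeVar("T")


def throttle(records: Iterable[T], per_minute: int,
             sleep: Callable[[float], None] = time.sleep,
             clock: Callable[[], float] = time.monotonic) -> Iterator[T]:
    """Hypothetical sketch: yield records no faster than `per_minute`,
    spacing them evenly (an assumption, not Alteryx's documented behaviour)."""
    if per_minute <= 0:
        raise ValueError("per_minute must be positive")
    interval = 60.0 / per_minute  # seconds between consecutive records
    next_allowed = clock()        # the first record can go straight away
    for record in records:
        now = clock()
        if now < next_allowed:
            # Too early: wait until this record's slot comes round.
            sleep(next_allowed - now)
            now = next_allowed
        # Book the slot for the following record.
        next_allowed = now + interval
        yield record
```

The `sleep` and `clock` parameters are only there so the pacing can be tested without actually waiting; in normal use the defaults (`time.sleep` and `time.monotonic`) apply.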

Why would you use it?

If you are downloading data from a website straight into Alteryx, you will often want to use the Throttle tool. If you don’t, you run the risk of overloading the website and even crashing it. You might also be mistaken for a malevolent force attempting a DoS (denial-of-service) attack, which is a one-way ticket to getting blocked. Some websites and APIs also have a rate limit, which caps how many requests you can make in a given time window (Twitter’s API is a well-known example). So, to avoid crashing a website, getting blocked and/or hitting your allocated rate limit, use a Throttle tool.
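To pick a sensible setting, it helps to convert an API's published rate limit into records per minute. The sketch below does that in Python; `throttle_setting` is a hypothetical helper, and the default 10% safety margin is just my own assumption, not an Alteryx or API rule.

```python
import math


def throttle_setting(max_calls: int, window_seconds: float,
                     safety: float = 0.9) -> int:
    """Hypothetical helper: turn a rate limit of `max_calls` per
    `window_seconds` into a whole number of records per minute."""
    if max_calls <= 0 or window_seconds <= 0:
        raise ValueError("max_calls and window_seconds must be positive")
    if not 0 < safety <= 1:
        raise ValueError("safety must be in (0, 1]")
    # Convert the limit to a per-minute rate, then shave off some headroom.
    per_minute = max_calls * 60.0 / window_seconds * safety
    # Round down so the setting never exceeds the limit.
    setting = math.floor(per_minute)
    if setting < 1:
        # A whole-number per-minute setting can't go this low
        # without breaking the limit.
        raise ValueError(f"limit allows only {per_minute:.2f} calls per minute")
    return setting
```

For example, an illustrative limit of 180 calls per 15 minutes gives `throttle_setting(180, 900)`, i.e. 10 records a minute with the default headroom, or 12 with `safety=1.0`.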

The Throttle Tool configuration

How do you use it?

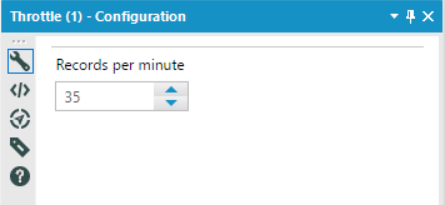

The Throttle tool is unbelievably simple to use. If you drag it into your workflow, then everything downstream (i.e. connected to its output) will be affected by it. To set it up, you simply type in or select the number of records per minute you want to limit your workflow to. And that is all there is to it. I’ve made a simple workflow to show what it looks like in context:

This workflow takes a list of URLs and downloads the data found on their pages. The Throttle tool is set to 35 records a minute to avoid overloading the website or hitting any rate limits. The downloaded data is then written straight to a .yxdb file, so I can go on to parse, clean and structure it without having to repeatedly hit the website.
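If you'd like to see the same pattern outside Alteryx, here is a rough Python equivalent of that workflow. `fetch_url` and `download_throttled` are hypothetical names; the sketch uses a simple fixed pause between requests rather than replicating the Throttle tool exactly, and a JSON Lines file stands in for the .yxdb, which is Alteryx's own format.

```python
import json
import time
import urllib.request
from typing import Callable, Iterable


def fetch_url(url: str, timeout: float = 30.0) -> str:
    """Download one page and return its body as text."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


def download_throttled(urls: Iterable[str], out_path: str, per_minute: int = 35,
                       fetch: Callable[[str], str] = fetch_url,
                       sleep: Callable[[float], None] = time.sleep) -> int:
    """Hypothetical sketch: fetch each URL with a fixed pause between requests
    and save the raw responses, so parsing can happen later offline."""
    if per_minute <= 0:
        raise ValueError("per_minute must be positive")
    interval = 60.0 / per_minute  # seconds to wait between requests
    count = 0
    with open(out_path, "w", encoding="utf-8") as out:
        for i, url in enumerate(urls):
            if i > 0:
                sleep(interval)  # pause before every request except the first
            body = fetch(url)
            # One JSON object per line: the URL and its raw page body.
            out.write(json.dumps({"url": url, "body": body}) + "\n")
            count += 1
    return count
```

Because the pause comes on top of however long each request takes, the real rate ends up at or below `per_minute`, which errs on the polite side.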

So there you have it – a tool with pretty limited scope, but one that can prove invaluable when given its time to shine. Thanks again to Chris for introducing it to me. As always, you can find me on Twitter: @olliehclarke.