The task – to scrape the ASOS "new in" clothing pages for men and women.

Problem #1 – Error: Download (1) Error transferring data: Failure when receiving data from the peer

We had to trick ASOS into thinking that we were people browsing the site and not a scraper. To do so, we had to go into the Headers settings of the Download tool and add a name and value: Name: User-Agent, Value: Chrome/47.0.2526.73. This allowed us to at least get the first page of data, where we could begin scraping what we needed.
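For readers working outside Alteryx, the same header trick can be sketched in Python. This is only an illustration: the URL is a hypothetical placeholder (the post does not give the exact address), and `build_request` is a name made up for this sketch. The User-Agent value is the one quoted above.

```python
import urllib.request

# Hypothetical URL, for illustration only; not the address used in the workflow.
URL = "https://www.example.com/new-in-clothing"


def build_request(url: str) -> urllib.request.Request:
    """Build a GET request that carries a browser-like User-Agent header."""
    headers = {"User-Agent": "Chrome/47.0.2526.73"}  # value quoted in the post
    return urllib.request.Request(url, headers=headers)


request = build_request(URL)

# urllib stores header names capitalised as "User-agent".
print(request.get_header("User-agent"))

# To actually fetch the page you would call:
# html = urllib.request.urlopen(request).read()
```

The request is only built here, not sent, so the snippet runs without touching any website.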

Problem #2 – Not reading Andy’s instructions properly

I wasted a lot of time trying to pull every piece of information about every product from the product details. This was not needed! It caused many problems, as different products had different descriptions in different orders, and in the end all we needed was the item type, e.g. whether it was a t-shirt. This led to deleting most of the Alteryx workflow and starting again.

Problem #3 – Not understanding the power of ?

I had a lot of problems with regex being too greedy and capturing everything rather than just the section I wanted. The solution -> ?. Adding a ? after a quantifier (for example .*?) makes it lazy, so the match stops at the first place the rest of the pattern can match instead of the last. This led to a newer, much cleaner workflow with fewer regex tools. It also allowed us to pull most of the information from the main ASOS listing pages, which each showed 72 items, rather than extracting from each individual product page (although we still needed to do this for the item type).
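The difference between greedy and lazy matching is easy to see on a tiny example. The sketch below uses Python's `re` module rather than the Alteryx RegEx tool, and the HTML fragment is made up for illustration; it is not ASOS's real markup.

```python
import re

# Made-up HTML fragment for illustration; not ASOS's real markup.
html = '<span class="name">T-shirt</span><span class="name">Socks</span>'

# Greedy: .* grabs as much as possible, so the match runs to the LAST </span>.
greedy = re.search(r'<span class="name">(.*)</span>', html).group(1)

# Lazy: .*? grabs as little as possible, so the match stops at the FIRST </span>.
lazy = re.search(r'<span class="name">(.*?)</span>', html).group(1)

# With the lazy pattern, each item can be pulled out separately.
all_items = re.findall(r'<span class="name">(.*?)</span>', html)

print(greedy)     # T-shirt</span><span class="name">Socks
print(lazy)       # T-shirt
print(all_items)  # ['T-shirt', 'Socks']
```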

Problem #4 – String Length

Just when I thought I had everything working correctly, errors kept coming in and I was unable to download data for each item. The cause was that the URL field produced by the regex tool was restricted to 300 characters, and some URLs were longer than this, so they were cut short. Out of frustration I set the string length to 1,000,000 to avoid this happening again! However, the same problem came back later, this time from a formula tool. Lesson – always be aware of the string length!
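A quick check for this kind of silent truncation can be sketched in Python. The function name and the URLs are hypothetical; only the 300-character limit comes from the post.

```python
def find_truncation_risks(values, field_size):
    """Return the values that would not fit in a string field of field_size characters."""
    return [value for value in values if len(value) > field_size]


# Hypothetical URLs; the second one is padded to exceed the limit.
urls = [
    "https://www.example.com/item/1",
    "https://www.example.com/item/2?" + "x" * 400,
]

too_long = find_truncation_risks(urls, field_size=300)

# A truncated URL is no longer the URL we meant to download.
truncated = urls[1][:300]

print(len(too_long))         # 1
print(truncated == urls[1])  # False
```

Running a check like this before the download step flags the problem rows up front, instead of leaving them to fail later.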

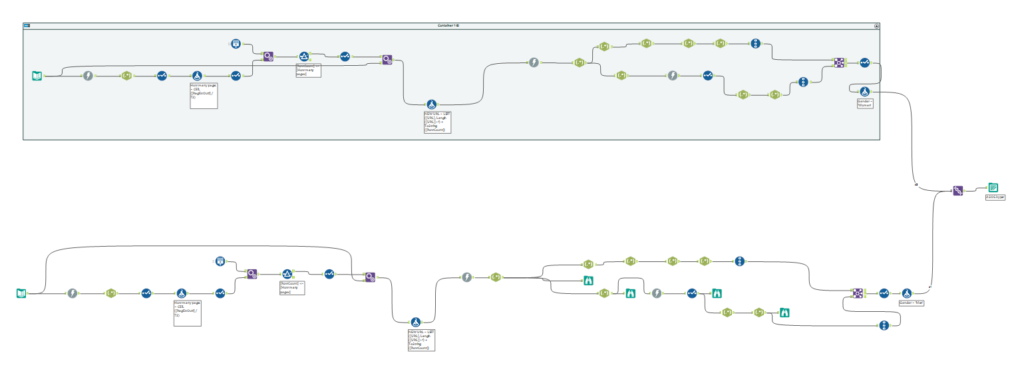

By well after lunch, the workflow was extracting the information needed from the first page of new in clothing for men and women. The next step was to extract the information from all the pages (10+ pages for each). Most people went for a macro for this, but I thought I could do it quicker without one and get the same results. I managed to get all the data for both men and women. It didn’t really save any time, but I found the steps more logical than using a macro. Once I had both sets, a simple union of the men’s and women’s data gave me all my data! Here is how my Alteryx workflow turned out…

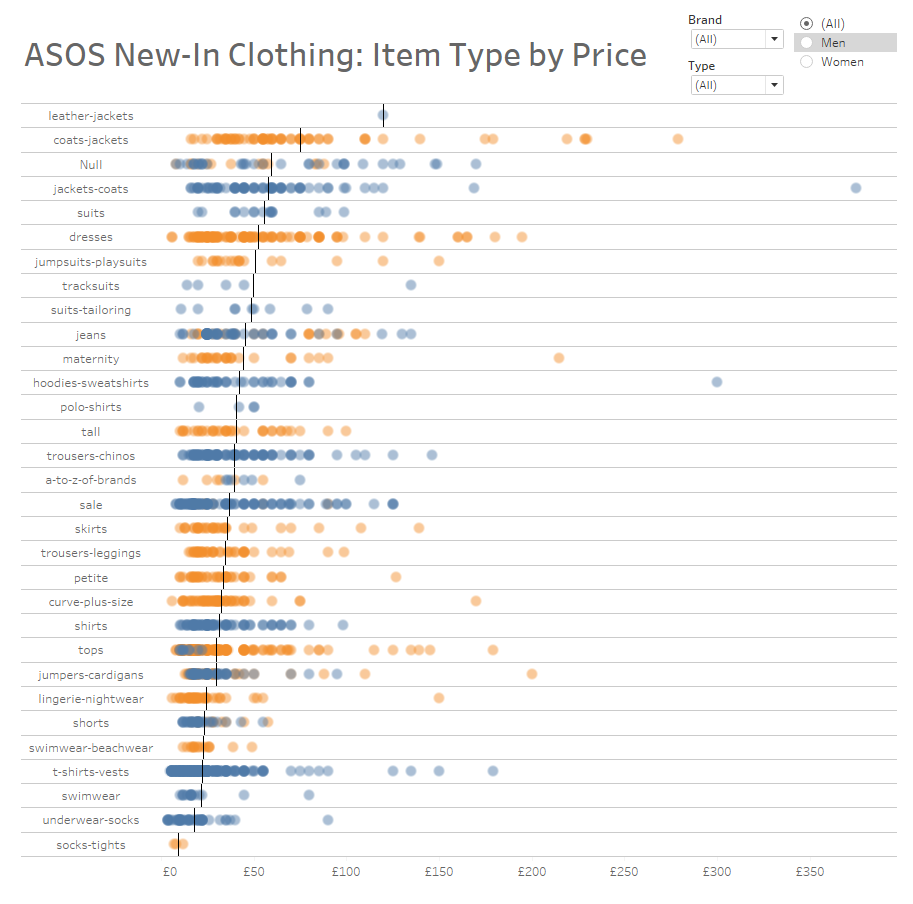

By now it was about 4pm, which meant a super quick dashboard and writing this blog post! I quickly created a dashboard showing how item prices vary by type, sorted by average price. Unsurprisingly, leather jackets were the most expensive and socks were the cheapest! Check out the dashboard below:

Overall, many lessons learnt. Bring on tomorrow!