In the last post (which was before dashboard week, so a rather distant last) we discussed how to navigate the wodge of documentation Google have around their Place API. Now, let’s find some geometry!

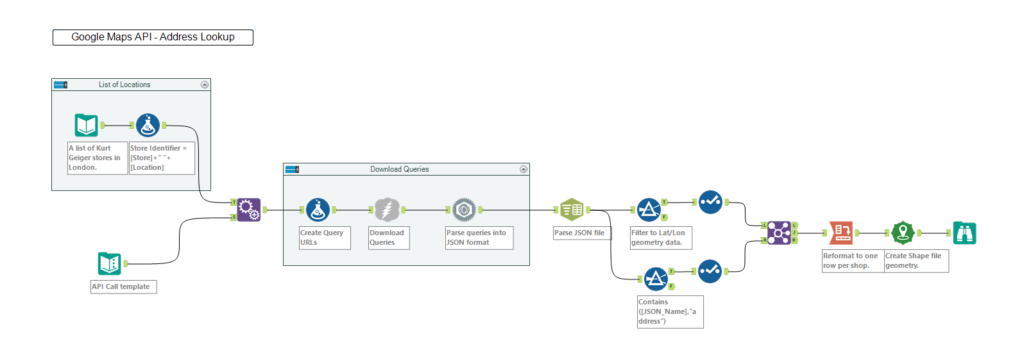

List of Kurt Geiger queries.

Step 3 – What are you looking for?

I’ve made a little list/table of Kurt Geiger locations in London based on how I search for the nearest store when using Google Maps, which can vary between “Kurt Geiger [street name]”, “Kurt Geiger [district]” or “Kurt Geiger [shopping centre]”. This reflects how we tend to search for things in reality and should demonstrate how useful the Places API can be in parsing these disparate descriptors into a set of locations.

Step 4 (Optional) – Test with Postman.

Now, to test the API. We are looking for basic geometry so our query will be quite simple. Nonetheless, I prefer test queries in an app called Postman (which I saw Natasha Kurakina using during a demo) which makes it clear for you to see which parameters you’re using (and indeed, which may be causing errors) as well as what you’re meant to get back, particularly when you’re on a metered connection like a Google API. However, if you’re good at writing queries, then you’ll be fine to stay in Alteryx. We’re only looking for the location latitude and longitude.

It also makes it a lot easier to see how JSON is effectively a bunch of nested lists.

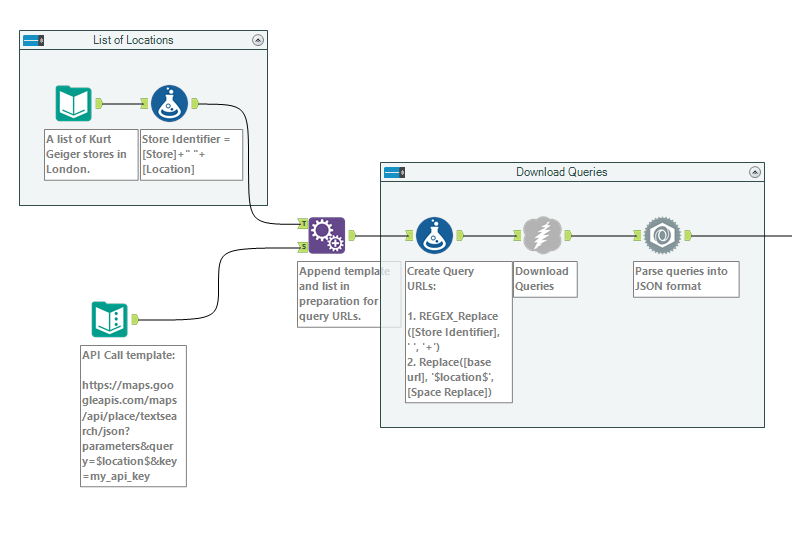

Step 5 – Create Search Queries

Now that you know the basic query you want to run, you need to create your search queries from the table you made earlier and the base query you started with. In Alteryx, this is a few formula tools – replacing spaces with + and the space in the query string (which I mark with ‘$location$’) with those search terms, such as Kurt+Geiger+One+New+Change, which is the nearest shop to the Data School. This should be achievable with just a replace formula, though if you’re looking for something more complex then you can use a regex_replace (as shown in an earlier post). You should end up with something like this;

Step – 6 Run the query!

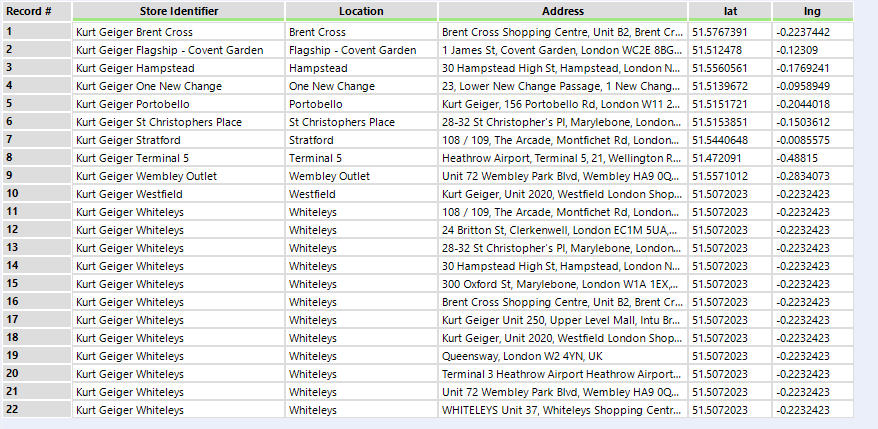

Stick on a download tool and a JSON parse after it, which should parse the JSON name and value fields. Right click on the parse field and click ‘cache and run’, which will run your workflow and hold the output of it locally, so you aren’t making repeated API calls when you run the workflow to iron out later parsing problems and burning through your credit (or any API limits, for that matter, though the latter is DS10’s speciality). Once called, then parse out the results – this may require regex (if you like using it), or you can use text to columns as JSON responses are structured in a way that means this is a viable alternative to regex. Make sure you keep the second number in the name field though, as that is what is connecting the results back to your original query. I parsed and filtered my responses into two sets, to obtain latitude and longitude and to obtain the actual address of the shop, before joining them back together and cross tabbing them in order to create a shape file, which can be put onto a map.

Wait, what happened to Whiteleys? The old shopping centre near Bayswater Road used to have a Kurt Geiger in it, but doesn’t any more. It’s clearly listing all the other shops, but the coordinates are only the same as the one in Westfield, which is the entry above Whiteleys. As the store is closed, the API query returned other Kurt Geiger stores near by as an alternative, but the method which I used to concatenate values for cross tabbing – to use the first for this store location – means that only the coordinates for the closest store (which is in Westfield) was returned. If I was progressing further with my dataset I could take Whiteleys out of my dataset altogether if I’m only interested in open shops, or return to the JSON file and parse out the shop status in addition to the geometry.

You can see the full workflow below;