This past week Carl has been on holiday and Andy has been baking pretty much full-time, so Dan Farmer (@danfarmerTIL) has been teaching us some spatial stuff in Tableau & Mapbox as well as some Alteryx data prep & web scraping techniques. I thought I’d pass on one of his knuggets of knowledge on the latter that I found really useful.

Web scraping involves extracting data from websites by fetching (downloading) a webpage and then parsing the content into whatever software you are using (in our case, Alteryx), where you can manipulate and prep it like you would any other data.
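If you wanted to try the fetch step outside Alteryx, a minimal Python sketch might look like the one below. It is not how Alteryx does it internally; it just shows the same idea using the standard library. The function name `fetch_html` and the 10-second timeout are my own assumptions.

```python
from urllib.request import urlopen


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Download a page and return its HTML as text.

    `fetch_html` is a hypothetical helper name used for illustration.
    """
    with urlopen(url, timeout=timeout) as response:
        # Fall back to UTF-8 if the response does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

You would call it with the address of the page you want to scrape, and the returned string is the raw HTML that the later steps split up and filter.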

Once the webpage data has been fetched, you need to separate it (e.g. by using a row to column tool to delimit) and then filter for the part you want to use (e.g. using filters and regex tools). Finding said “part you want to use” can sometimes be harder than you think. This is where you can use a really easy trick, which I will highlight below.

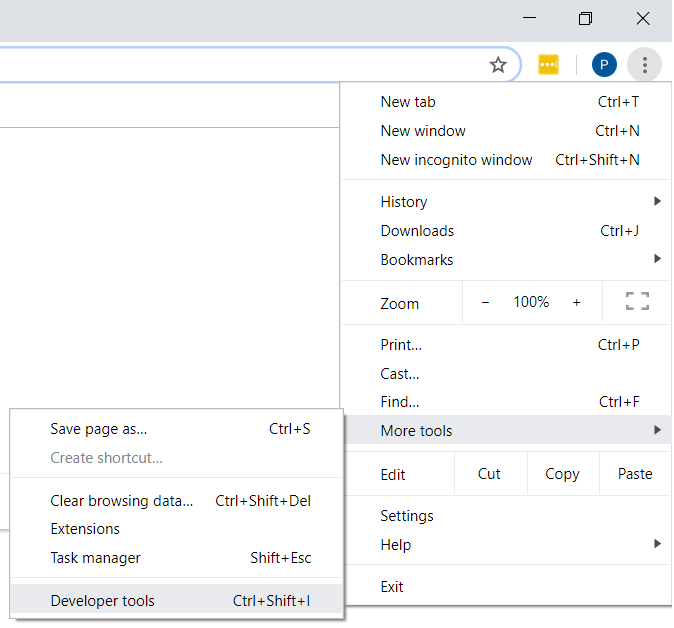
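The split-then-filter idea can be sketched in Python too. This is only an illustration under my own assumptions: `SAMPLE_HTML` is a made-up stand-in for a downloaded page, and `find_lines` is a hypothetical helper that splits the HTML into one row per line and keeps the rows matching a regex.

```python
import re

# Hypothetical sample standing in for a downloaded page (not real site markup).
SAMPLE_HTML = """<html>
<body>
<h1>Welcome</h1>
<a class="video-link" href="/videos/intro">Watch video</a>
<p>Some other text</p>
</body>
</html>"""


def find_lines(html: str, pattern: str) -> list[str]:
    """Split HTML into one row per line and keep rows matching the regex."""
    rows = html.split("\n")
    regex = re.compile(pattern, flags=re.IGNORECASE)
    return [row.strip() for row in rows if regex.search(row)]


# Keep only the rows that contain an anchor tag with an href attribute.
matches = find_lines(SAMPLE_HTML, r"<a\s[^>]*href=")
print(matches)
```

The hard part is knowing which pattern to filter on in the first place, which is exactly what the trick in this post helps with.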

The data scraped from the webpage comes in HTML format and can be viewed in a Google Chrome browser (other browsers are available) by clicking on the following:

3 dots in a vertical line on the top right of Chrome > More Tools > Developer Tools

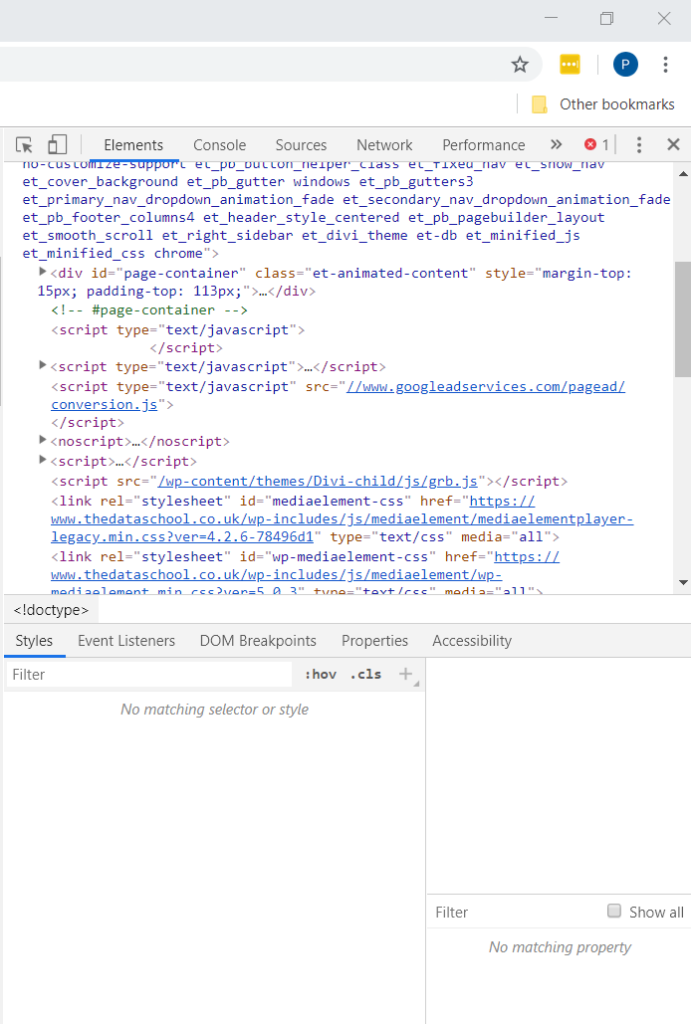

Now you can see the source code of whatever website you are looking at (in this example, The Data School’s homepage).

There is a lot of code here, most of which is not super interpretable for those not well-versed in reading HTML. If only there were a tool which let you identify the particular part of the page you want to see the code for… oh wait, there is!

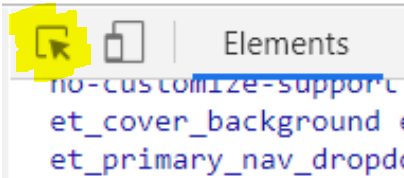

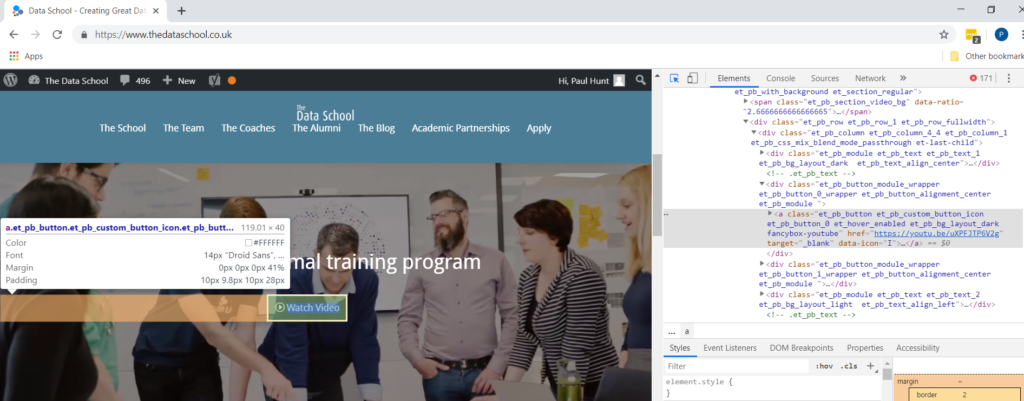

If you click the element inspection button highlighted in yellow in the screengrab above (the mouse cursor on a square), you can then click on a particular part of the webpage to see its source code.

For example, say we want the code for the video on The Data School’s homepage. We click the element inspection button in the Developer Tools window and then click on the “watch video” button on The Data School webpage. This highlights the respective code so that you can quickly work out how you should approach retrieving the target data and avoid wasting (as much) time foraging through HTML.
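Once the inspector has shown you which tag and class the target sits in, you can aim your extraction straight at it. Here is a rough Python sketch of that idea using the standard library's `html.parser`. The markup in `snippet`, the `watch-video` class name, and the `LinkFinder` and `find_links` names are all invented for illustration; the real page's markup will differ.

```python
from html.parser import HTMLParser


class LinkFinder(HTMLParser):
    """Collect href values from <a> tags that carry a given class."""

    def __init__(self, target_class: str) -> None:
        super().__init__()
        self.target_class = target_class
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # An element can have several space-separated classes.
        classes = (attributes.get("class") or "").split()
        if tag == "a" and self.target_class in classes:
            href = attributes.get("href")
            if href:
                self.hrefs.append(href)


def find_links(html: str, target_class: str) -> list[str]:
    """Return the hrefs of all anchors with the given class."""
    finder = LinkFinder(target_class)
    finder.feed(html)
    finder.close()
    return finder.hrefs


# Hypothetical markup, as if copied from the highlighted part of the inspector.
snippet = (
    '<div><a class="btn watch-video" href="/videos/intro">Watch video</a>'
    '<a class="btn" href="/about">About</a></div>'
)
print(find_links(snippet, "watch-video"))  # prints ['/videos/intro']
```

The same logic applies in Alteryx: the class or tag you spot in the inspector becomes the thing you filter or regex on.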

Happy days.