Hello, everyone!

I have to admit, the other DSers were completely right: Dashboard Week is hell. It’s not the hours spent staring at a computer screen, or the fiddling around with floated objects on a dashboard that gets to you. No, it’s the Internet’s infinite amount of rabbit holes. Just when you think you’ve found the perfect data source that will solve all your problems and allow you to build the visualization of the century, you realize that it’s actually all a lie and that the data is actually outdated, incomplete, or simply inexistent. The more time you waste finding a new data source, the later you know you will stay up. At least we’ve reached the halfway mark, and I’m pretty satisfied with what I’ve gotten done so far!

The topic for today was one of my favorites: public transit systems. As a social scientist, I find it fascinating to look for innovative ways to visualize information commonly given to policymakers and decision-makers in massive tables and PDFs. Today was a real treat for that. The work I had to do to get to my dashboard definitely wasn’t – but heck, I learned a lot! Let’s dig right into what I had to do.

Washington Metropolitan Area Transit Authority

Andy originally assigned me the task of building Berlin’s metro system map and visualizing daily ridership. After roughly two hours, however, I realized none of this information was available online and spent the following two hours finding something I could work with. I ended up opting for the WMATA, which offers fantastic sets of APIs that allow you to obtain all types of information ranging from the geolocations of all the stations to the times at which trains leave the stations. You can sign up and get access to all their data yourselves here. I was also thankful to find a data set containing average daily ridership data from a strange website that looked completely fake, but heck, when you’re desperate, you just have to go for it (and I don’t think I got any viruses – yet).

My objectives were the following:

- Build a map of the metro system, with all the stations, and lines connecting them. So yes, a map… of the metro system.

- Figure out which stations are the busiest.

- Build a viz.

- Get some sleep. Haha as if that was going to happen. Fooled you, didn’t I?

As always, this data story is going to start with the same step: Alteryx

Pulling data from an API using Alteryx

If there’s one thing Dashboard week has taught me, it’s how to use APIs. In simple terms, APIs are applications that allow you to make queries to databases hosted by organizations to access their data. To access them, you need an API key which you can apply for free on most online websites, and just to follow the instructions they give you in their documentation.

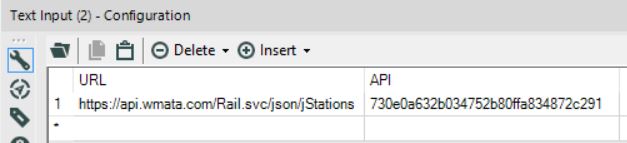

To call an API in Alteryx, you are first going to want to create a Text Input and create two fields: one with the base of the URL you will use your API call, and a second one with the API key (please note there is an infinite amount of ways to do this, this is just my favorite method).

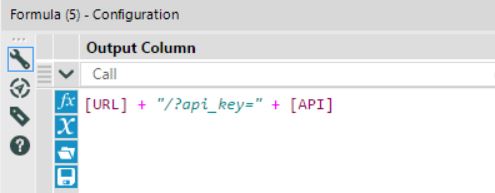

After you’ve done that, you are going to want to use a Formula tool to build the final URL you are going to call in the download tool. The main reason the Formula tool is useful is if you wanted to make many API calls at once: You could replace a piece of the string in the URL you are calling with that field, so that every row would make a different query, allowing you to largely automate the process of making multiple API calls at once.

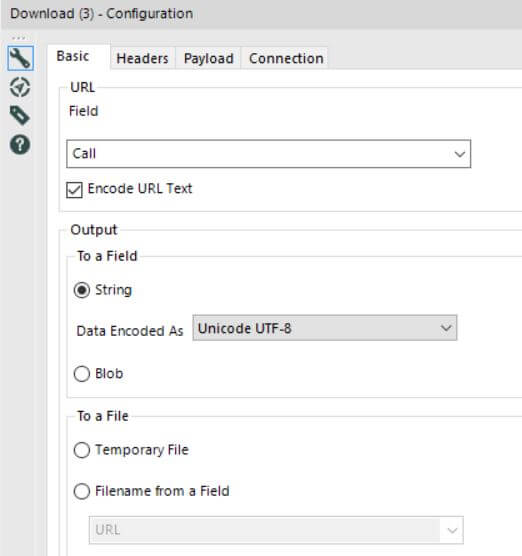

After you’ve built the URL, simply bring in a Download tool and tell it to call the field that has your URLs in.

And voila! That’s all there is to it really. Each API will be different and have its own set of parameters you can use to specify your queries, so just read the documentation provided by the organization you are trying to get data from and adjust what I’ve done here.

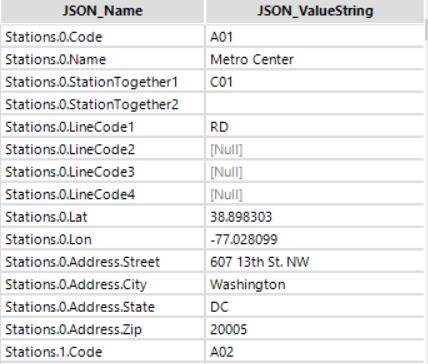

In my case, my query returned a whole bunch of data in the JSON format about each of D.C.’s metro system’s stations, including their latitude and longitudes (Yay!). After simply putting a Json Parse tool after my Download tool, I obtain these two beautiful columns of data. Almost there!

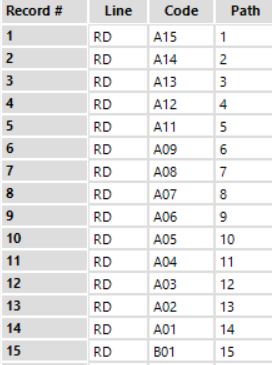

In order to build the lines between each of the stations in Tableau, I’m going to need to build a path. A path is simply a sequence of numbers telling Tableau the order of the stations in a particular line. In the screenshot below, you can see that on metro line RD, station A15 comes first, then A14, then A13, etc.

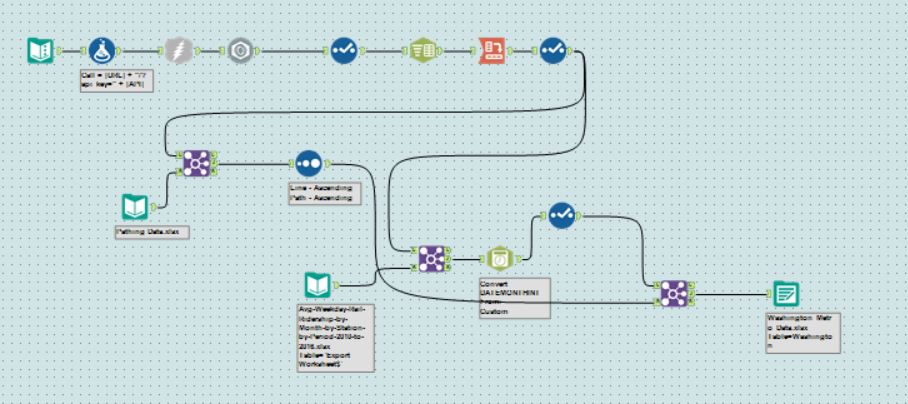

Beyond these two steps – the API calls and the pathing – the rest of my Alteryx work for the day was some simple cleaning, cross tabs, and joins. Here’s what the full workflow I used looks like. Believe it or not, the iterative process of getting these APIs to work took me about 6 hours to build, and this workflow used to be four times as long.

Let’s Visualize Some Metro Data!

Having made sure the data I was going to work with was clean and ready-to-go, the process of actually building my visualization was pretty straight forward. All I needed to do was:

- Add my latitudes and longitudes on the map to build my points

- Build the lines between each of the points

- Put it in a dashboard

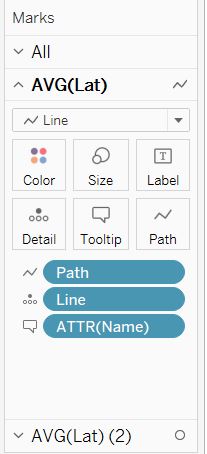

To build the base of the map – the actual metro lines – you first need to add the field that has your lines into detail, switch the mark type to lines, and then add your path field into Path. Here’s what that looks like:

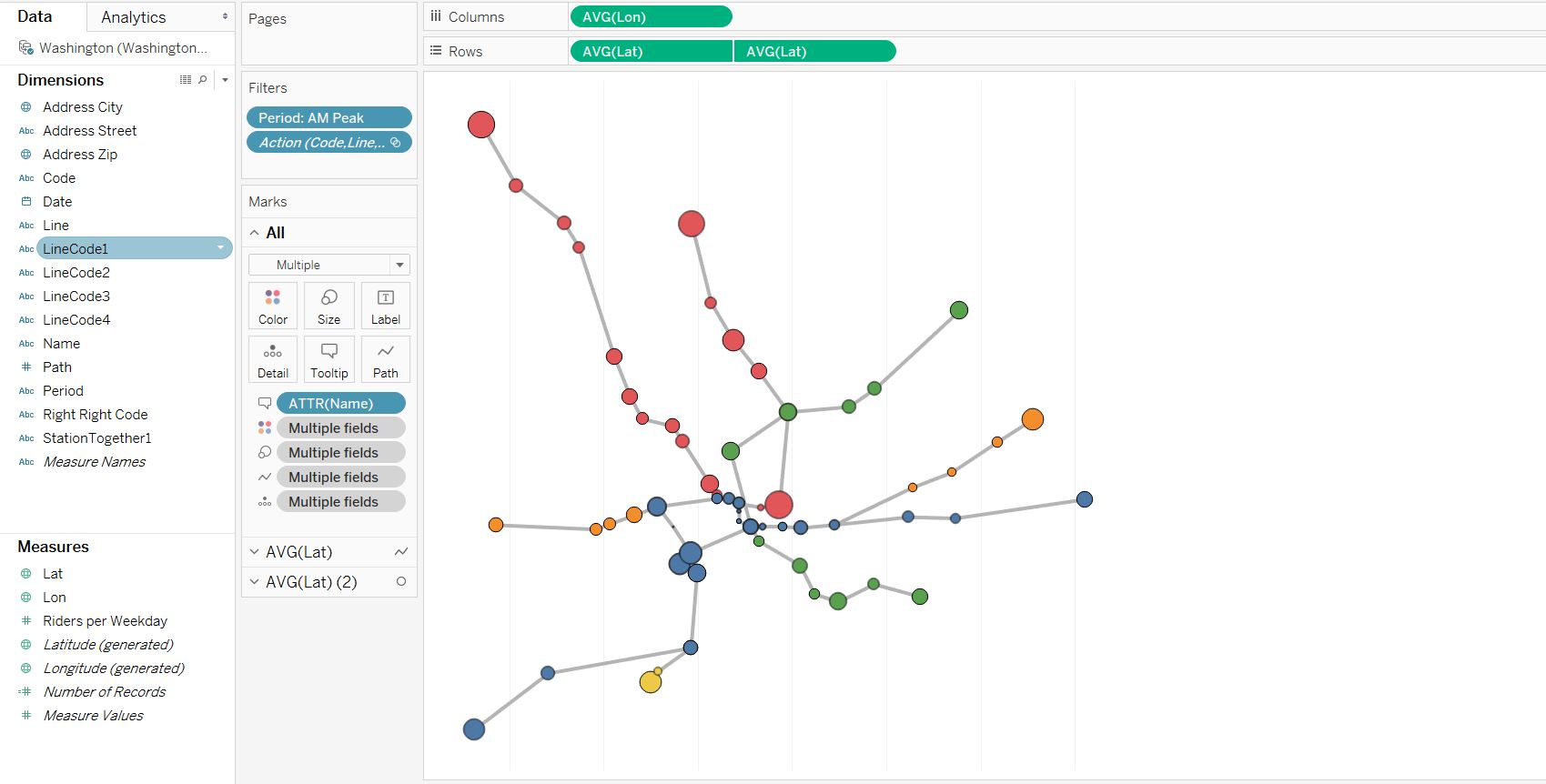

After that, simply add another Latitute pill on your row shelf, dual-axis your maps to lay them on top of one another, and then add your stations onto your new card and switch the mark type to circles. Take a look:

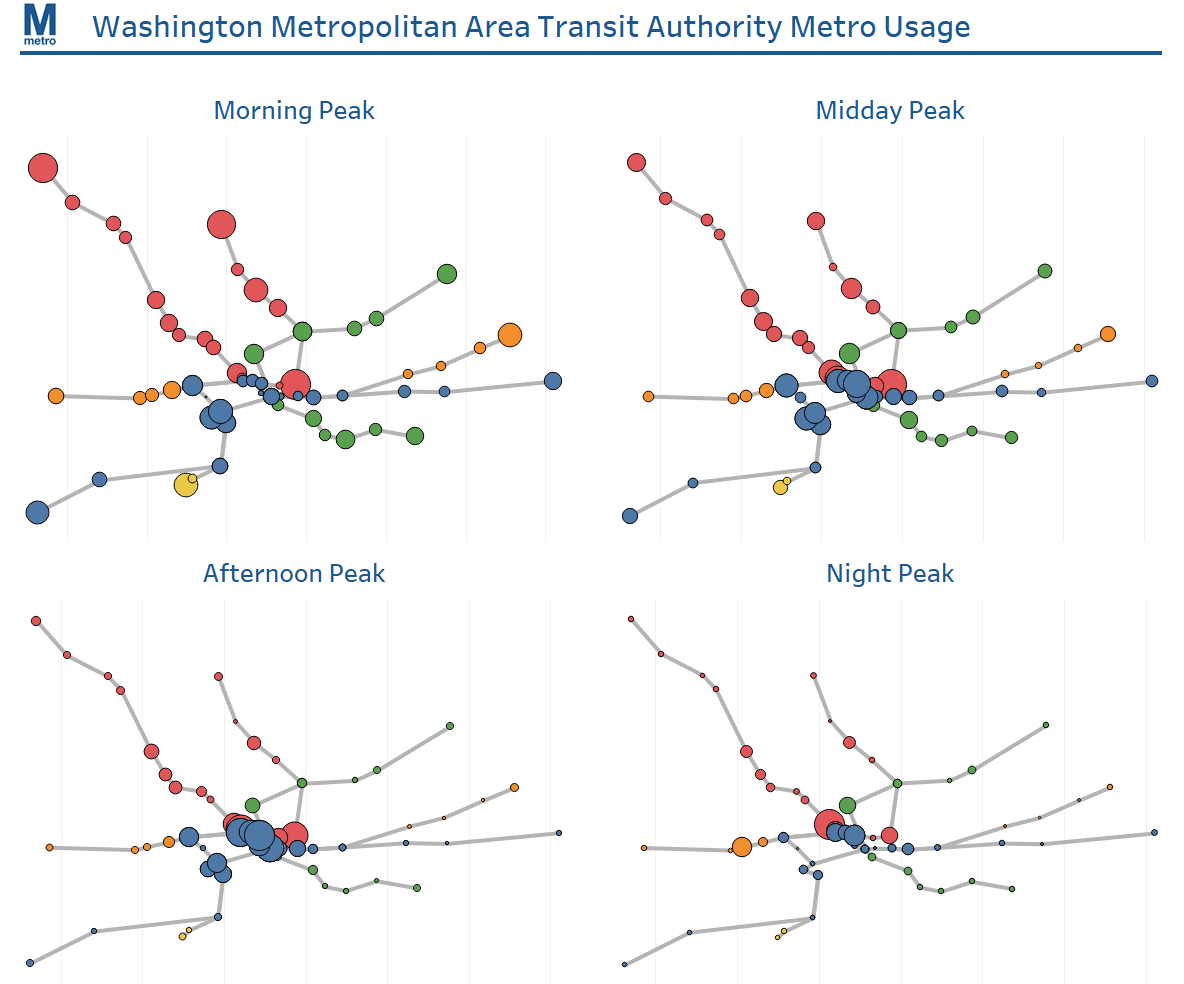

After that was done, I had everything I needed to build my dashboard. Considering how much time it took me to get here, I opted for something very simple and purely aesthetic. I wanted to allow a user to easily be able to identify which parts of the metro are the most used at different times of the day. Thankfully, my data split ridership by the different periods of the day – AM Peak, PM Peak, etc. – so I could use that as a filter.

You can check it out on my Tableau Public profile here. All in all, I’m pretty satisfied with this dashboard, and this day: I’ve never seen the team as distressed and worried as I did today, but I was also amazed by the level of technical challenge we could take head on and vanquish.