First impressions of the first day of Dashboard week.

- Andy seems to be really enjoying this. (And I feel shivers down my spine when I hear him not-so-quietly laughing while looking at tomorrow’s dataset…)

- Narrow down the question. Swim or sink.

We were introduced to a complex dataset (the data dictionary itself was an Excel file with 27 sheets!). Too much to chew on for a single day working alone. My approach was:

- Spend as much time as needed to understand the data. What is in there, what is not.

- Write down a few questions that, upon checking the data dictionary, I thought would be interesting to analyse.

- Answer the question: what data do I need? Forget anything else.

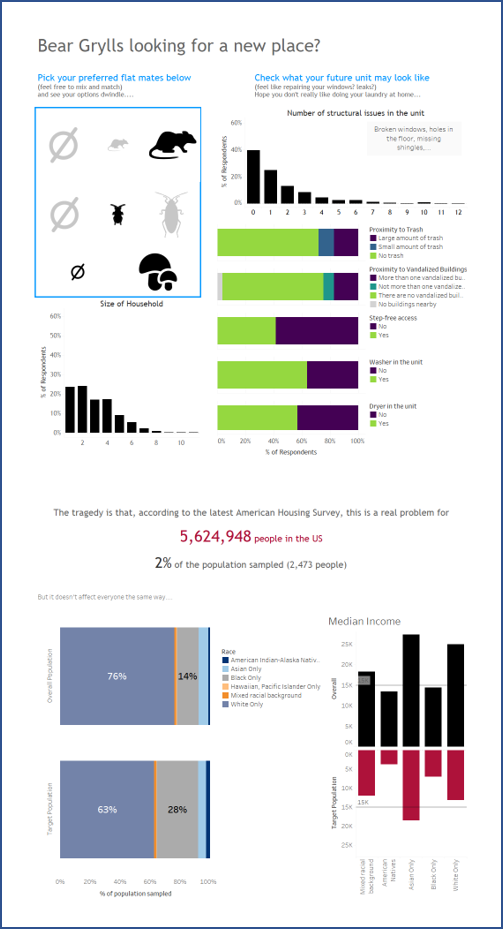

The data came from the American Housing Survey. My first idea was to analyse the distribution of vacant houses, complement the data with other information from the census and find different stories. It soon went sour when I realized that not all the information in the data dictionary was actually available in our dataset (some fields had been withheld, probably because they contain PII)… so, on to the next question: how bad are the worst housing conditions? Who are the people affected? What else can I think of once I start to analyse the data?

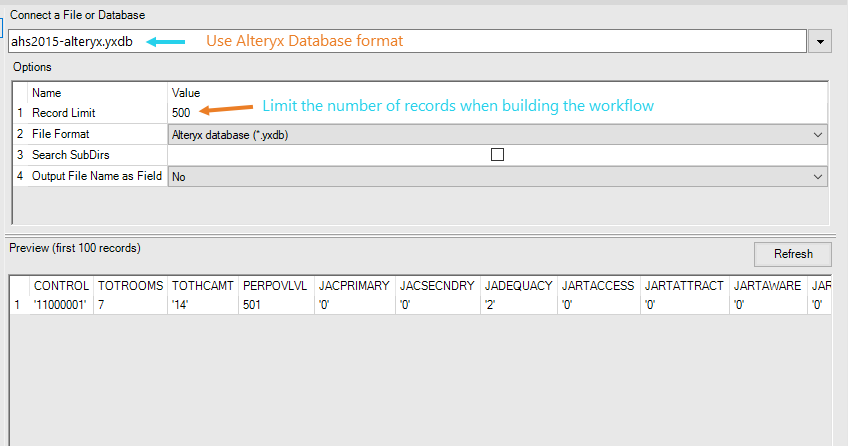

I then dived into Alteryx but, first, two pre-processing steps:

- Convert the data to the Alteryx database format (.yxdb). It will save precious minutes every time the workflow is run afterwards (which will happen frequently while building it).

- Limit the number of records imported (the “record limit” option) while doing trials, so that only a subset is read and processed (remember to reset it at the end!).

Select only what you need and transform the data as needed.
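For readers who do not use Alteryx, the same two habits (cap the records while experimenting, keep only the fields you need) can be sketched in plain Python. This is only an illustrative analogue, not the Alteryx workflow itself: the `load_subset` helper, the sample data and the column names are all hypothetical stand-ins.

```python
import csv
import io
from itertools import islice


def load_subset(source, columns, record_limit=None):
    """Read rows from a CSV file object, keeping only `columns`.

    record_limit=None reads every row; an integer caps how many rows are read,
    much like a record limit on an input step while prototyping.
    """
    reader = csv.DictReader(source)
    rows = islice(reader, record_limit)  # islice(reader, None) yields all rows
    return [{name: row[name] for name in columns} for row in rows]


# Hypothetical sample standing in for the survey extract.
SAMPLE = """CONTROL,VACANCY,REGION,UNUSED_FIELD
1,0,3,x
2,1,1,y
3,0,4,z
"""

NEEDED = ["CONTROL", "VACANCY"]  # assumed fields of interest

# Trial run: only the first two records, only the needed fields.
trial = load_subset(io.StringIO(SAMPLE), NEEDED, record_limit=2)

# Final run: drop the limit so every record is imported.
full = load_subset(io.StringIO(SAMPLE), NEEDED)

print(len(trial), len(full))
```

Making the limit an explicit argument with a default of `None` means the "full" run is the default, which makes it harder to forget to reset it.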

Go back and forth to Tableau: does this work? Do I need to change anything?

Once you are good to go… remember to import all records!